I've been building and running agents for the past few months now, and watching this space evolve has been nothing short of wild. One week, we're celebrating agents that can write decent code. The next, they're autonomously debugging complex systems and coordinating with other agents to ship entire features.

Jaana Dogan, a Google engineer, has said that Claude Code rebuilt their system in an hour.

The pace is relentless. Just when you think you've got a handle on what's possible, someone drops a new model, a new framework, or a completely different way of thinking about agent architecture. It's exhausting to keep up sometimes, but it's also incredibly exciting.

Take OpenClaw (ClawdBot/MoltBot — see our complete setup guide), for example. When it went viral in January 2026, it wasn't just another AI demo. It was a glimpse into a future where personal AI agents are as common as smartphones, helping with everything from scheduling to research to shopping. The virality wasn't about the tech being perfect. It was about people finally seeing what's possible when agents have the right tools and permissions to act on your behalf.

That's what this article is about: the trends that are making agents more capable, more accessible, and more integrated into how we build software and run businesses. These aren't predictions pulled from thin air. They're grounded in data from Anthropic's 2026 Agentic Coding Trends Report, industry research from firms like EY and IBM, and real conversations happening on X, Reddit, and Hacker News.

Let's dig in.

TL;DR

What is agentic AI? Agentic AI refers to AI systems that can autonomously pursue goals, make decisions, use tools, and take actions with minimal human supervision - unlike traditional AI that simply responds to prompts.

| Trend | What it means | Why it matters |
|---|---|---|
| CLI Agents | Command-line AI assistants are replacing traditional IDEs | Developers report 30% faster code shipping with CLI-based agents |
| Multi-Agent Systems | Single agents evolving into coordinated teams | Fountain achieved 50% faster screening using hierarchical multi-agent orchestration |
| Agentic Commerce | AI agents making purchases on your behalf | 20% of e-commerce tasks expected to be handled by agents in 2025; 800M+ active OpenAI users |
| AI Governance | Regulations like EU AI Act taking effect | 33% of enterprise software will feature agentic AI by 2028 (Gartner) |
| Personal AI Assistants | AI assistants becoming mainstream | clawd.bot's viral moment signals shift from novelty to necessity |
| Context Engineering | Focus shifting from prompts to context | Claude Opus 4.6's 1M token context window changes how we architect systems |
| Vertical AI Agents | Industry-specific agents outperforming general-purpose ones | Healthcare, legal, finance seeing 40%+ efficiency gains |
| Small Language Models | Edge AI and efficient models gaining traction | SLMs like Phi-4 matching larger models at 10x lower cost |
| Recursive Language Models | Models with native reasoning and self-refinement capabilities | OpenAI o1, DeepSeek R1 showing 2-3x improvement on complex reasoning tasks |
| Live Web Data Access | Real-time data becoming essential for agents | Agents without fresh data hallucinate 35% more frequently |
| Browser Agents | AI automating web-based workflows | Browser automation market expected to grow 45% YoY |

What are the top 11 agentic AI trends to watch in 2026?

1. CLI agents are taking over development

Command-line AI agents are fundamentally changing how developers write code. Tools like Claude Code, Cursor's composer, Continue.dev, and Windsurf are shifting development from clicking through IDEs to conversational interfaces in the terminal.

The paradigm shift is about delegation over suggestion. IDE-based AI tools live in sidebars and primarily offer code suggestions that require human approval for every change. CLI agents run autonomously for hours, coordinating changes across dozens of files, executing shell commands to verify their work, and committing results with descriptive messages. The difference: you pair program with an IDE assistant, but you delegate to a CLI agent.

The numbers tell the story. According to Anthropic's 2026 report, engineers using agentic coding tools report a net decrease in time spent per task but a much larger net increase in output volume. At TELUS, teams using Claude Code shipped engineering code 30% faster while saving over 500,000 hours, averaging 40 minutes saved per AI interaction.

Why terminals work better for autonomous agents:

| Advantage | CLI agents | IDE agents |
|---|---|---|
| Context management | Treat context as a scarce resource. Run grep, get filtered results, load only what's needed. The filesystem is the only state: a file is written or it isn't, a test passes or fails. This binary nature reduces hallucination. | Load schemas for every available tool, open files, and conversation history into context windows, causing "context pollution" that degrades performance over time. |
| Feedback loops | Atomic operations with exit codes (0 = success, >0 = error). If a test fails with exit code 1, the agent reads stderr, plans a correction, and retries, all without human intervention. | Require visual inspection for every change. Human must manually verify and provide feedback. |
| Composability | Text-native. Unix philosophy treats code, logs, and errors as uniform text streams, the native language of LLMs. Can pipe commands together (find TypeScript files → filter imports → run sed) without special API integration. | GUI-based. Need special API integration for each operation. Cannot easily compose operations. |
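The exit-code feedback loop in the table can be sketched in a few lines of Python. This is a minimal illustration, not how any particular CLI agent is implemented: `run_until_green` and `propose_fix` are hypothetical names, and the "fix" here simply creates a missing file where a real agent would ask a model to plan a correction from stderr.

```python
import os
import subprocess
import sys
import tempfile
from typing import Callable


def run_until_green(
    command: list[str],
    propose_fix: Callable[[str], None],
    max_attempts: int = 3,
) -> bool:
    """Run `command`; on a non-zero exit code, pass stderr to `propose_fix` and retry."""
    for _ in range(max_attempts):
        result = subprocess.run(command, capture_output=True, text=True)
        if result.returncode == 0:  # 0 = success, anything else = error
            return True
        propose_fix(result.stderr)  # hypothetical hook: a real agent would call a model here
    return False


# Demo: a "test" that fails until a marker file exists.
marker = os.path.join(tempfile.mkdtemp(), "fixed.txt")
check = [
    sys.executable, "-c",
    "import os, sys; sys.exit(0 if os.path.exists(sys.argv[1]) else 'missing ' + sys.argv[1])",
    marker,
]

seen_errors: list[str] = []


def fake_fix(stderr: str) -> None:
    seen_errors.append(stderr)   # the agent "reads" stderr
    open(marker, "w").close()    # and applies a correction


ok = run_until_green(check, fake_fix)
print(ok, seen_errors)
```

The loop never needs a human to look at the screen: the exit code decides whether to stop, and stderr is the only input to the next attempt.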

But it's not just about speed. CLI agents handle the full software development lifecycle: writing tests, debugging failures, generating documentation, and navigating increasingly complex codebases. At Rakuten, engineers tested Claude Code with a complex technical task: implementing a specific activation vector extraction method in vLLM, a massive open-source library with 12.5 million lines of code across multiple languages. Claude Code finished the entire job in seven hours of autonomous work with 99.9% numerical accuracy.

The shift is profound: software development is moving from an activity centered on writing code to one grounded in orchestrating agents that write code. Engineers who master this orchestration can shepherd multiple features through development simultaneously, applying their judgment across a broader scope than individual implementation previously allowed.

Developer sentiment points the same way. Multiple discussions on Hacker News and Reddit show developers abandoning "AI-enhanced IDEs" for terminal-based agents. A recurring view in those threads: CLI agents aren't replacing developers; they're making experienced developers more effective and lowering the barrier for junior developers to contribute meaningfully.

This shift is broader than IDE vs. terminal preference. More and more teams are moving away from MCPs entirely, opting for direct API calls and CLIs instead. The overhead of MCP setup, schema loading, and context cost doesn't always justify the convenience, especially when you're running production pipelines where token efficiency matters. The MCP token cost difference is significant in practice—benchmarks show CLI averaging 200 tokens per command versus 32,000–82,000 tokens for the equivalent MCP operation.
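To put the quoted benchmark figures in perspective, here is the arithmetic as a short Python sketch. The token counts are the ones cited above; the batch of 1,000 operations is an arbitrary assumption chosen only to make the totals concrete.

```python
CLI_TOKENS_PER_COMMAND = 200
MCP_TOKENS_LOW, MCP_TOKENS_HIGH = 32_000, 82_000
OPERATIONS = 1_000  # assumed batch size, for illustration only


def overhead_ratio(mcp_tokens: int, cli_tokens: int = CLI_TOKENS_PER_COMMAND) -> float:
    """How many times more tokens the MCP path uses than the CLI path."""
    return mcp_tokens / cli_tokens


low = overhead_ratio(MCP_TOKENS_LOW)
high = overhead_ratio(MCP_TOKENS_HIGH)
cli_total = CLI_TOKENS_PER_COMMAND * OPERATIONS
mcp_total_low = MCP_TOKENS_LOW * OPERATIONS
mcp_total_high = MCP_TOKENS_HIGH * OPERATIONS

print(f"MCP uses {low:.0f}x to {high:.0f}x the tokens of a CLI command")
print(f"Over {OPERATIONS} operations: {cli_total:,} vs {mcp_total_low:,} to {mcp_total_high:,} tokens")
```

On those figures the gap is 160x to 410x per operation, which is why it shows up quickly in production pipelines.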

Read more: Why CLIs are better for AI coding agents than IDEs

2. Multi-agent systems are becoming the standard

Single-agent workflows are giving way to coordinated teams of specialized agents working in parallel. This shift addresses a fundamental limitation: complex tasks exceed what any single agent context window can handle effectively. The software infrastructure that coordinates these agents — managing tool execution, memory, and state persistence across sessions — is what practitioners now call an agent harness.

Anthropic's report highlights this as a foundation trend: "Organizations in 2026 will be able to harness multiple agents acting together to handle task complexity that was difficult to imagine just a year ago."

The architecture is straightforward: an orchestrator agent coordinates specialized sub-agents, each with dedicated context, working in parallel. The results are impressive. Fountain achieved 50% faster screening, 40% quicker onboarding, and 2x candidate conversions using hierarchical multi-agent orchestration, cutting one customer's staffing time from weeks to less than 72 hours.
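The orchestrator pattern can be sketched with Python's `asyncio`. This is an illustrative skeleton under stated assumptions, not Fountain's system or any framework's API: `SubAgent`, `orchestrate`, and the task names are all hypothetical, and the `asyncio.sleep(0)` stands in for a real model or tool call.

```python
import asyncio
from dataclasses import dataclass, field


@dataclass
class SubAgent:
    name: str
    context: list[str] = field(default_factory=list)  # each sub-agent keeps its own context

    async def run(self, task: str) -> str:
        self.context.append(task)
        await asyncio.sleep(0)  # placeholder for a model or tool call
        return f"{self.name} finished: {task}"


async def orchestrate(plan: dict[str, str]) -> list[str]:
    """Fan tasks out to specialized sub-agents in parallel and collect their results."""
    agents = [SubAgent(name) for name in plan]
    return await asyncio.gather(*(agent.run(plan[agent.name]) for agent in agents))


plan = {
    "screener": "rank applicants",
    "scheduler": "book interviews",
    "onboarder": "send paperwork",
}
results = asyncio.run(orchestrate(plan))
for line in results:
    print(line)
```

Because each sub-agent holds only its own task in `context`, no single context window has to carry the whole job, which is the limitation the pattern is meant to address.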

Zapier deployed 800+ AI agents internally, with 89% AI adoption across the entire organization. Design teams use Claude artifacts to rapidly prototype during customer interviews, showing design concepts in real time that would normally take weeks to develop.

3. Agentic commerce is reshaping online shopping

The way we shop online is fundamentally changing. Instead of searching, comparing, and manually checking out, consumers are delegating product discovery and purchases to AI agents. The shift is happening faster than anyone predicted.

The numbers are staggering: OpenAI had 800 million active users as of April 2025, a figure that doubled in just weeks between February and April. 39% of U.S. consumers have already used AI for online shopping, and 53% plan to in 2025. By year-end, agents are expected to handle 20% of e-commerce tasks, potentially hundreds of billions of dollars in transactions.

But there's a problem: existing payment infrastructure wasn't designed for autonomous agents making purchases on your behalf.

Mastercard Agent Pay addresses this gap. The system uses tokenization technology to create unique Agentic Tokens that tie AI agents to individual users while safeguarding payment credentials. The infrastructure is being built with Microsoft, PayPal, Block, IBM, and Adyen to ensure user intent is verified and central to every transaction.

4. AI governance and regulations are accelerating

The regulatory landscape is shifting from discussions to enforcement. The EU AI Act is the most comprehensive framework so far, establishing risk-based obligations for AI systems operating in the EU.

According to Gartner research cited in EY's report, 33% of enterprise software will feature agentic AI by 2028. But there's a catch: 25% of enterprise cybersecurity incidents will be due to the misuse of AI agents by both external attackers and internal threats. Isolating agent execution inside an AI agent sandbox is the primary technical control for limiting blast radius when an agent is compromised or misbehaves.

The regulation impact:

- Transparency requirements: AI systems must disclose when users are interacting with agents

- Data governance: Stricter rules on training data provenance and usage

- Accountability frameworks: Clear lines of responsibility when agents make decisions

- Risk assessments: Mandatory evaluations for high-risk AI applications

Beyond the EU, we're seeing movement in the U.S. with executive orders on AI safety, China's regulations on generative AI, and industry-specific frameworks in healthcare and finance.

5. Personal AI assistants are going mainstream

Personal AI agents are moving from tech demos to daily tools. The shift accelerated dramatically with clawd.bot's viral moment in January 2026, showing what happens when an AI assistant is given the right permissions and tools to act on your behalf. See our OpenClaw + Firecrawl guide for a hands-on walkthrough of building and deploying your own, or browse the best OpenClaw skills to extend what your agent can do out of the box. To pick the right search backend for your OpenClaw agent, see our comparison of the best OpenClaw search providers.

What's changed? Earlier virtual assistants were limited by rigid programming. Modern personal agents leverage large language models with expanded context windows, protocol standardization (MCP/A2A), and improved reasoning capabilities. They can understand nuanced requests, maintain context across conversations, and integrate with multiple services. Building a real-time voice assistant that searches the web and manages your inbox through spoken conversation is now a single Python file with Gemini Live and Firecrawl.

For instance, I have a few personal agents running: one tracks my spending and flags budget issues every week, another hunts for thrift deals online monthly, a third manages my meeting schedule and automatically reschedules conflicts, and a fourth pre-screens my email and drafts responses to repetitive asks. Nothing revolutionary, just automating the stuff I'd rather not do manually... like it's 2025 (haha).

6. Context engineering is replacing prompt engineering

The real skill isn't writing prompts anymore; it's curating what fills the context window. Models like Claude Opus 4.6 with 1 million token context windows seem to have solved the problem: just dump everything in. But research suggests otherwise.

Context rot is real. Studies show LLM performance degrades as context grows, even on simple tasks. A Databricks study found model correctness starts to drop at around 32,000 tokens, long before you hit million-token limits. Models also struggle with the "lost in the middle" phenomenon: information buried mid-context gets ignored, regardless of relevance.

Context fails in four ways:

- Poisoning: A hallucination enters context and gets repeatedly referenced — a direct consequence of AI agents using outdated facts instead of live web data

- Distraction: Context grows so large the model over-focuses on history, not training knowledge

- Confusion: Irrelevant content influences responses (models perform worse with more tools available)

- Clash: Different parts of context directly contradict each other

The solution isn't bigger windows; it's better curation. Context engineering means finding the minimum set of high-signal tokens. Structure matters as much as content: place critical information at the beginning or end, never the middle. Use just-in-time retrieval instead of pre-loading everything. Separate static context (coding standards, API specs) from dynamic context (current state, real-time data) to enable prompt caching.
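One way to apply these rules is a small context assembler. This is a sketch under assumptions: `Snippet`, `rough_tokens`, and `build_context` are hypothetical names, the relevance scores would come from whatever retriever you use, and the word-count token estimate is deliberately crude (real tokenizers differ).

```python
from dataclasses import dataclass


@dataclass
class Snippet:
    text: str
    score: float  # relevance score from a retriever (assumed to exist)


def rough_tokens(text: str) -> int:
    # Crude stand-in for a tokenizer: count whitespace-separated words.
    return len(text.split())


def build_context(static_prefix: str, snippets: list[Snippet], task: str, budget: int) -> str:
    """Static context first (stable, so cacheable), best snippets next, the task last."""
    remaining = budget - rough_tokens(static_prefix) - rough_tokens(task)
    chosen: list[str] = []
    for snippet in sorted(snippets, key=lambda s: s.score, reverse=True):
        cost = rough_tokens(snippet.text)
        if cost <= remaining:  # keep only high-signal snippets that fit the budget
            chosen.append(snippet.text)
            remaining -= cost
    return "\n\n".join([static_prefix, *chosen, task])


static = "Coding standard: use type hints."
task = "Task: fix the failing login test."
snippets = [
    Snippet("Changelog from 2019 describing an unrelated billing migration in detail", 0.2),
    Snippet("login.py raises KeyError on missing session token", 0.9),
    Snippet("CI runs pytest", 0.5),
]
context = build_context(static, snippets, task, budget=22)
print(context)
```

The static prefix never changes between calls, so a provider-side prompt cache can reuse it, while the task sits at the end where it is least likely to be lost in the middle. The low-relevance changelog snippet is dropped because it does not fit the budget.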

7. Vertical AI agents are outperforming general-purpose ones

Industry-specific agents trained on domain knowledge are showing significantly better results than general-purpose assistants adapted to specific fields.

EY's research highlights applications across sectors:

Healthcare: Agentic AI is shifting from pattern recognition to active involvement in diagnosis, treatment planning, and patient monitoring. It monitors real-time vitals, triggers alerts, suggests interventions, assists in referrals, and helps researchers explore drug options and manage clinical trial steps.

Manufacturing: Moving beyond predictive maintenance to sustainable operations by acting on data without human input. Agentic AI detects faults, decides next steps, initiates repairs or production shifts, adapts workflows in real time, manages inventory, and improves sustainability through smarter resource use.

Financial Services: Real-time fraud mitigation, credit risk evaluation, and customer service routing. It notices issues, temporarily stops suspicious payments, and adjusts investment portfolios based on market changes. The concern: oversight, trust, and accountability in autonomous financial decisions.

FMCG: Helps companies adapt to changing trends by autonomously managing stock, detecting sales fraud, and ensuring safety compliance through multi-agent systems.

The performance gap: vertical agents typically show 40%+ efficiency gains over general-purpose agents adapted to the same domain. The reason: they understand domain-specific terminology, regulatory requirements, common workflows, and edge cases that general models miss.

8. Small language models are proving enterprise-ready

SLMs (Small Language Models) are gaining traction as organizations realize bigger isn't always better. Models like Microsoft's Phi-4, Google's Gemini Nano, Meta's Llama 3.2, Hugging Face's SmolLM, and Alibaba's Qwen2.5 are demonstrating that careful training and architecture choices can rival much larger models on specific tasks.

Recent research validates this shift. A comprehensive survey on SLMs for agentic systems (October 2025) found that small language models (1-12B parameters) are sufficient and often superior for agentic workloads where objectives are schema- and API-constrained. The paper analyzes late-2025 models like Phi-4-Mini, Qwen-2.5-7B, and Llama-3.2-3B, focusing on tool use reliability and strict data schema adherence.

NVIDIA's position paper (June 2025) argues that serving a 7B SLM is 10-30x cheaper in latency, energy consumption, and FLOPs than a 70-175B LLM. Microsoft's Phi-4 (14B parameters) achieves 88.0% on MMLU, surpassing GPT-3.5 (175B), while consuming 92% less energy per inference.

The quality of training data appears as crucial as quantity. Models like Phi-1 achieve strong results with carefully curated datasets. Google's Gemini Nano models (Nano-1 with 1.8B parameters, Nano-2 with 3.25B) exhibit exceptional performance in factuality, retrieval, reasoning, STEM, coding, and multimodal tasks.

The economics matter: SLMs require 10x less compute for inference, can run on-device without API calls, offer faster response times (critical for interactive applications), and enable data privacy (no data leaving your infrastructure).

IBM Granite models, for example, achieve over 90% cost savings compared to larger alternatives. These smaller, open models are designed for developer efficiency and deliver exceptional performance across enterprise tasks from cybersecurity to RAG applications.

Market projection: The SLM market is expected to grow from $0.93 billion in 2025 to $5.45 billion by 2032 (CAGR 28.7%). Gartner predicts that by 2027, organizations will implement small, task-specific AI models with usage volume at least three times more than general-purpose LLMs.
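The quoted growth rate can be checked from the two endpoints with the standard compound annual growth rate formula. A short Python sketch, assuming the projection spans the seven years from 2025 to 2032:

```python
def cagr(start_value: float, end_value: float, years: int) -> float:
    """Compound annual growth rate: (end / start) ** (1 / years) - 1."""
    return (end_value / start_value) ** (1 / years) - 1


rate = cagr(0.93, 5.45, 2032 - 2025)  # market size in billions of USD
print(f"Implied CAGR: {rate * 100:.1f}%")
```

The result rounds to 28.7%, so the projection's endpoints and its stated CAGR are consistent with each other.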

The catch: SLMs aren't universal replacements. They excel at focused tasks but struggle with broad knowledge requirements or complex reasoning that larger models handle. The skill: knowing when an SLM is sufficient vs. when you need a frontier model's capabilities.

9. Recursive Language Models (RLMs) are breaking context limits

The biggest constraint in building agents has been context windows. Even with million-token limits, models struggle with long prompts. Recursive Language Models (RLMs) solve this by treating long prompts as external environments that models can programmatically examine, decompose, and recursively call themselves over.

Instead of processing the entire prompt at once, RLMs break it into snippets, process each piece recursively, and synthesize results. The model can call itself like a function, examining different parts of the input as needed. This enables processing inputs up to two orders of magnitude beyond context windows, without hitting the context rot issues traditional long-context models face.
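The split, recurse, and synthesize idea can be illustrated with a toy sketch. This is not the researchers' implementation: `recursive_answer` is a hypothetical helper, the "model" is a keyword filter standing in for an LLM call, and the sketch assumes partial answers are short enough to merge in a single call.

```python
from typing import Callable

Model = Callable[[str, str], str]  # (query, text) -> answer


def recursive_answer(query: str, lines: list[str], model: Model, max_lines: int = 4) -> str:
    """Answer over an input too long for one call by recursing on halves, then merging."""
    if len(lines) <= max_lines:  # small enough: one direct call
        return model(query, "\n".join(lines))
    mid = len(lines) // 2
    partials = [
        recursive_answer(query, half, model, max_lines)
        for half in (lines[:mid], lines[mid:])
    ]
    # Synthesis step: the model only ever sees the short partial answers.
    return model(query, "\n".join(p for p in partials if p))


call_sizes: list[int] = []


def toy_model(query: str, text: str) -> str:
    # Stand-in for an LLM: keep the lines that mention the query term.
    call_sizes.append(len(text.splitlines()))
    return "\n".join(line for line in text.splitlines() if query in line)


doc = [f"line {i}: ok" for i in range(16)]
doc[3] = "line 3: ERROR disk full"
doc[12] = "line 12: ERROR timeout"

answer = recursive_answer("ERROR", doc, toy_model)
print(answer)
print("largest single call:", max(call_sizes), "lines")
```

The 16-line input is four times the per-call limit, yet no single call sees more than four lines, which is the property that lets the approach scale past the context window.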

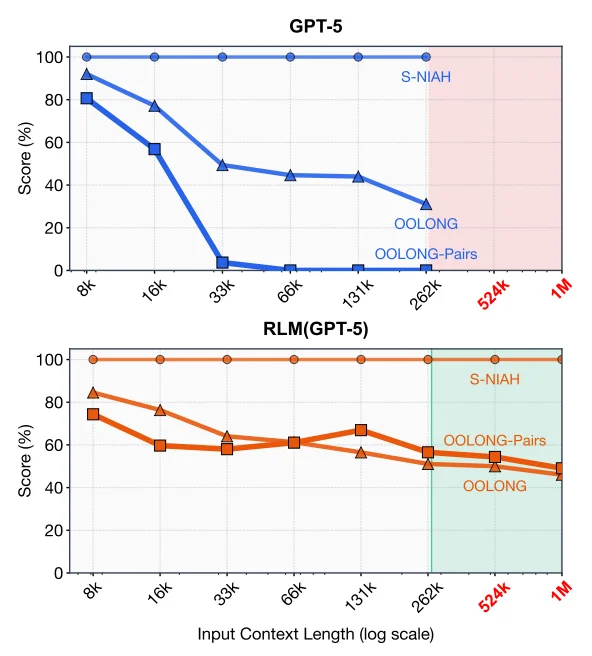

The results are striking. MIT researchers built RLM-Qwen3-8B, the first natively recursive language model, which outperforms the underlying Qwen3-8B model by 28.3% on average across long-context tasks. Even more impressive: it approaches vanilla GPT-5 quality on three tasks, despite being 50x smaller. For shorter prompts, RLMs dramatically outperform vanilla frontier models and common long-context scaffolds at comparable cost.

Here's a comparison of GPT-5 and a corresponding RLM using GPT-5 on three long-context tasks of increasing complexity:

The breakthrough here isn't just handling longer inputs. It's making models better at using context. Traditional models suffer from "lost in the middle" problems where they ignore information buried in long prompts. RLMs actively navigate to relevant information, process it in focused chunks, and synthesize findings, more like how humans work through complex documents.

What this enables:

- Agents that process entire codebases: Not just referencing files, but systematically analyzing dependencies across thousands of files

- Long-document reasoning: Processing legal contracts, research papers, or technical specifications that exceed context limits

- Multi-stage problem decomposition: Breaking complex reasoning into recursive sub-problems

- Better context utilization: Higher accuracy on long-context tasks compared to vanilla models with larger windows

10. Live web data access is becoming essential

LLMs are trained on static datasets with knowledge cutoffs. For agents making real-world decisions, stale data is a liability.

The problem: agents without access to fresh data hallucinate significantly more — around 35% higher hallucination rates on tasks requiring current information. Whether it's checking product availability, pulling latest documentation, or verifying real-time prices, agents need live web search APIs and data access. For a framework on when each data source applies — training data, RAG, or live web data — see the dedicated breakdown.

For web data specifically, tools like Firecrawl handle the complexity of modern websites, such as JavaScript rendering and dynamic content loading. The output is clean, structured data ready for AI consumption.

Practical examples:

- E-commerce agents: Checking current inventory and pricing before purchases

- Research agents: Pulling latest papers, news, and discussions

- Support agents: Accessing up-to-date documentation and known issues

- Financial agents: Real-time market data and news for trading decisions

11. Browser agents are automating complex workflows

Browser automation has existed for years, but AI agents are making it dramatically more accessible and capable. Instead of writing brittle Selenium scripts that break when websites change, agents can navigate interfaces visually, understand context, and adapt to layout changes. For a comprehensive overview of available tools, see our guide to the best browser agents.

Tools like Anthropic's Claude integration with Chrome, browser use libraries, and Playwright AI enable agents to:

- Fill out forms: Understanding field purposes from labels and context

- Navigate workflows: Following multi-step processes across pages

- Extract information: Scraping data while understanding page structure

- Interact with web apps: Clicking buttons, selecting options, uploading files

The browser automation market is expected to grow 45% year-over-year, driven largely by AI capabilities.

Real applications:

- Testing: Agents that can test web applications like users do

- Data entry: Automating repetitive form submissions across systems

- Research: Gathering information from multiple sources automatically

- Integration: Connecting systems that lack APIs by automating their UIs

What about enterprise AI adoption?

Enterprise AI adoption is accelerating, but the approach is pragmatic rather than revolutionary. According to EY research, major firms expect agentic AI to reshape enterprise decisions, operations, and automation by 2028.

Spotify built an internal tool called "Honk" that lets engineers deploy features in minutes by describing what they want in plain English through Slack. The system uses generative AI, including Claude Code, to handle remote code deployment in real time. The result? Their best developers haven't written a line of code since December; they're orchestrating AI agents instead.

(P.S. We built a similar tool called Spring at Firecrawl. Spring is our 'super agent' and is used across engineering, marketing, sales, support, and admin, all from within Slack.)

Key statistics:

- Gartner predicts 33% of enterprise software will feature agentic AI by 2028

- 80% of customer service problems will be solved independently by 2029, saving 30% of total costs

- 15% of work choices will be overseen by agentic AI globally

- 40% of companies will rely on AI to guide employee behaviors by 2028

- 10% of global boards will seek AI guidance for key executive decisions by 2029

The enterprise approach:

- Start with clear ROI: Focus on high-value, well-defined tasks

- Build with oversight: Human-in-the-loop for high-stakes decisions

- Scale incrementally: Expand from pilots to production gradually

- Invest in training: Teams need to learn agent orchestration skills

- Establish governance: Security, compliance, and audit trails from day one

Organizations treating agentic AI as a strategic priority in 2026 will define what becomes possible. Those treating it as an incremental productivity tool will discover they're competing in a game with new rules.

Building your own agent? Make sure it has access to live web data

If you're building agents, whether for research, customer support, e-commerce, or any task requiring current information, web data access isn't optional. It's fundamental.

The good news: building agents is increasingly accessible. Tools like LangChain, LlamaIndex, CrewAI, and frameworks from major LLM providers make orchestration straightforward. You can have a basic agent running in an afternoon.

The challenge: most LLMs aren't great at web fetch. They're trained on static data, and their web access (when available) is often limited or unreliable. Modern websites with JavaScript rendering and complex authentication present real obstacles.

This is where Firecrawl becomes essential. It's built specifically for AI applications:

- /scrape endpoint: Transforms JavaScript-heavy pages into markdown or structured JSON suitable for embedding

- /extract endpoint: Converts semi-structured web data into consistent JSON outputs based on your schemas

- /crawl endpoint: Systematically explores websites to gather interconnected information

- /map endpoint: Discovers site structure for efficient navigation

- /agent endpoint: Autonomous web research agent that can navigate websites, follow links, and gather information based on natural language goals
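As a rough idea of what calling the /scrape endpoint looks like, here is a sketch that builds the HTTP request with Python's standard library without sending it. The base URL, API version, payload fields (`url`, `formats`), and bearer-token header are assumptions based on common usage; check the current Firecrawl API reference before relying on them. `build_scrape_request` and the placeholder key are hypothetical.

```python
import json
import urllib.request

# Assumption: base URL and version may differ; confirm against the Firecrawl docs.
API_BASE = "https://api.firecrawl.dev/v1"


def build_scrape_request(url: str, api_key: str) -> urllib.request.Request:
    """Build (but do not send) a POST request asking for a page as markdown."""
    payload = {"url": url, "formats": ["markdown"]}  # assumed payload shape
    return urllib.request.Request(
        f"{API_BASE}/scrape",
        data=json.dumps(payload).encode("utf-8"),
        headers={
            "Authorization": f"Bearer {api_key}",
            "Content-Type": "application/json",
        },
        method="POST",
    )


req = build_scrape_request("https://example.com", "fc-YOUR-KEY")  # placeholder key
print(req.get_method(), req.full_url)
print(req.data.decode("utf-8"))
```

Sending it would be a matter of passing `req` to `urllib.request.urlopen` with a real key and parsing the JSON response; the official SDKs wrap this for you.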

For agents making real-world decisions, reliable web data access isn't a nice-to-have; it's table stakes. Whether you're building a research assistant that needs the latest papers, a shopping agent comparing prices, or a support agent referencing current documentation, Firecrawl handles the messy reality of modern websites so your agents can focus on their core tasks. The Firecrawl Agent goes further by autonomously researching topics, following relevant links, and compiling comprehensive information without requiring you to specify exact URLs or crawl patterns. For teams that need a fully self-hostable stack (custom model providers, own infrastructure, domain-specific skill playbooks), the open-source firecrawl-agent project scaffolds a complete web research agent in two commands.

Get started with Firecrawl and build agents with the context they need to be genuinely useful.

The agent space is moving faster than most of us can keep up with, but that's exactly what makes it exciting. These eleven trends aren't exhaustive; they're snapshots of where we are right now and where we're likely heading in the next year.

If you're building in this space, the message is clear: the gap between early adopters and late movers is widening. The teams that figure out agent orchestration, embrace standardized protocols, and give their agents access to live data are shipping faster and building capabilities that weren't feasible six months ago.

Build with agents. Give them good tools. Make sure they have access to current data. And ship something.

Frequently Asked Questions

What are agentic AI systems?

Agentic AI systems are AI applications designed to autonomously make decisions and act with limited supervision. Unlike traditional AI that responds to prompts, agentic systems can pursue complex goals, break them into subtasks, use tools, and adapt their approach based on results. They combine LLMs with the ability to take actions in the real world.

Why is agentic AI growing so fast in 2026?

Several factors converged: improved reasoning capabilities in models, standardized protocols for tool integration (MCP/A2A), larger context windows reducing architectural complexity, and real-world success stories proving ROI. The Anthropic report documents how early adopters are achieving 30-50% efficiency gains, which drives adoption.

Are AI agents going to replace developers?

No. The shift is from writing code to orchestrating agents that write code. Developers who master agent orchestration become more effective, handling broader scope and shipping features faster. Junior developers can contribute more meaningfully earlier. But human judgment, architectural decisions, and strategic thinking remain essential.

What's the difference between agentic AI and generative AI?

Generative AI creates content (text, images, code) in response to prompts. Agentic AI takes actions autonomously toward goals. An LLM writing code when prompted is generative AI. An agent that can debug a system by running tests, reading logs, modifying code, and verifying fixes is agentic AI. Agentic systems use generative models as components but add planning, tool use, and autonomy.

How do I get started building AI agents?

Start simple: pick a well-defined task, choose a framework (LangChain, LlamaIndex, or vendor-specific tools), give your agent access to necessary tools, and implement human oversight for high-stakes decisions. Focus on tasks where you can easily verify correctness. Start with single agents before attempting multi-agent systems. And ensure your agents have access to live data sources-especially web data via tools like Firecrawl.

What are the security risks with AI agents?

Agents with tool access can take actions with real consequences. Key risks: unauthorized data access if permissions aren't properly scoped, prompt injection attacks manipulating agent behavior, cost runaway if agents make expensive API calls unchecked, and liability issues when agents make mistakes. Mitigation: strict permission boundaries, human approval for high-stakes actions, spend limits, comprehensive logging, and regular security audits.
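Several of these mitigations (permission boundaries, human approval, spend limits, logging) can sit in one small gate that every tool call passes through. The sketch below is illustrative only: `Guard`, `ActionDenied`, and the tool names are hypothetical, and a production system would also need sandboxing and persistent audit storage.

```python
from dataclasses import dataclass, field


class ActionDenied(Exception):
    """Raised when a tool call violates policy."""


@dataclass
class Guard:
    allowed_tools: set[str]
    spend_limit_usd: float
    needs_approval: set[str] = field(default_factory=set)
    spent_usd: float = 0.0
    audit_log: list[str] = field(default_factory=list)

    def authorize(self, tool: str, cost_usd: float, approved: bool = False) -> None:
        """Check a tool call against policy; log the decision either way."""
        if tool not in self.allowed_tools:
            self._deny(f"tool not allowlisted: {tool}")
        if tool in self.needs_approval and not approved:
            self._deny(f"human approval required: {tool}")
        if self.spent_usd + cost_usd > self.spend_limit_usd:
            self._deny("spend limit exceeded")
        self.spent_usd += cost_usd
        self.audit_log.append(f"ALLOW {tool} ${cost_usd:.2f}")

    def _deny(self, reason: str) -> None:
        self.audit_log.append(f"DENY {reason}")
        raise ActionDenied(reason)


guard = Guard(
    allowed_tools={"search", "purchase"},
    spend_limit_usd=1.00,
    needs_approval={"purchase"},
)
guard.authorize("search", 0.25)

outcomes: list[str] = []
attempts = [
    ("delete_db", 0.0, False),   # not on the allowlist
    ("purchase", 0.50, False),   # high-stakes, no human approval
    ("purchase", 0.50, True),    # approved and within budget
    ("search", 0.50, False),     # would push spend past the limit
]
for tool, cost, approved in attempts:
    try:
        guard.authorize(tool, cost, approved)
        outcomes.append("allowed")
    except ActionDenied as denied:
        outcomes.append(str(denied))

print(outcomes)
print(guard.audit_log)
```

Putting the checks in one place means the agent's own reasoning never decides whether an action is permitted, which limits what a prompt injection can achieve.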

Which agentic AI trend will have the biggest impact?

Multi-agent systems and access to live web data together have the highest multiplicative impact. Multi-agent systems unlock task complexity single agents can't handle. Live web data access ensures these agents can make decisions based on current information rather than stale training data, avoiding the roughly 35% higher hallucination rate seen in agents without fresh data and enabling real-world applications like commerce, research, and support. The combination enables emergent capabilities we're only beginning to explore.