TL;DR

- Gemini CLI's built-in fetch tool fails on most pages that aren't plain developer docs. Protected sites return 403s, JavaScript-rendered pages come back empty.

- Firecrawl gives AI agents and apps fast, reliable web context with strong search, scraping, and interaction tools — it runs a real browser, returns clean markdown, and supports structured data extraction with JSON schemas.

- Two integration paths: Agent Skills (one install command, recommended) or MCP server (manual config, more control).

- Three working examples show scraping, structured extraction, and browser interaction on a page the built-in tool couldn't reach.

- A RAG workflow builds a local knowledge base from 18 PubMed Central papers and queries across them with Gemini CLI's 1M-token context window.

Most websites now recognize the request patterns of coding agents and reject them automatically.

Gemini CLI's built-in fetch tool gets caught in this all the time. Anything that isn't plain developer documentation tends to come back as a 403 or an empty response. And when a fetch fails, whatever you were trying to build on top of that data stalls with it.

Firecrawl solves this by running a real browser on its servers and returning clean markdown. This article covers how to wire it into Gemini CLI and put it to work, from single-page scrapes to building a local knowledge base from research papers.

What is Gemini CLI?

Gemini CLI is Google's open-source AI agent for the terminal.

It's Apache 2.0 licensed and the current stable version is v0.36.0 as of April 2026. Most developers picked it up for coding assistance, working through large codebases, and running shell tasks. The free tier is a big reason why: OAuth gets you 1,000 requests per day with Gemini 2.5 Pro and a 1M-token context window.

It also ships with two web-facing tools.

One for real-time Google Search and one for fetching URL content. Both are geared toward coding workflows, like checking library versions or reading docs that post-date the model's training cutoff. They work for quick lookups mid-session but weren't built for pulling data from the web in any serious way.

What is Firecrawl?

Firecrawl is a web search and scraping API for AI agents that returns markdown instead of raw HTML.

A typical webpage goes from hundreds of thousands of tokens as HTML to a few thousand as markdown, which directly cuts how much you spend on API calls. It runs Playwright (a browser automation tool) behind the scenes, so JavaScript-rendered pages actually load instead of coming back empty.

Beyond basic scraping, Firecrawl supports:

- Web search and get clean, structured content from every result in a single API call.

- Structured data extraction via the

/agentendpoint (research preview), using JSON schemas or natural language prompts - Site crawling that follows links across up to 10,000 pages

- URL mapping to discover every page on a domain

- Browser interaction for clicking, scrolling, and filling forms on live pages

It connects to Gemini CLI through Agent Skills or as an MCP server (a standard protocol that lets AI tools talk to external services).

Firecrawl web search

The /search endpoint is worth calling out separately because it does something standard search APIs don't: it runs the search and scrapes each result page in the same call.

A typical search API returns titles, snippets, and URLs. You still have to fetch each page yourself to get the actual content. With Firecrawl's /search, you get full markdown from every result alongside the metadata. For a Gemini CLI session, that means one tool call can return the content of the top 10 results, ready to pass directly to the model.

Key parameters:

- query: your search term

- limit: number of results to return

- sources:

web(default),news, orimages - categories: filter for

github,research, orpdfresults - tbs: time-based filtering, e.g.

qdr:dfor the past 24 hours - scrapeOptions: enable full-page content extraction alongside the search

Pricing: 2 credits per 10 results. Adding page scraping costs 1 credit per page on top of that.

In the RAG example below, firecrawl-search is what discovers the PubMed Central article URLs before they get scraped as markdown.

How do you set up Gemini CLI?

Prerequisites: Node.js 20.0.0 or higher.

Install with npm, npx, or Homebrew:

npm install -g @google/gemini-cli

# or

npx @google/gemini-cli

# or

brew install gemini-cliThen authenticate through your browser:

gemini auth loginOnce that's done, you're on the free tier with tools for file operations, shell commands, memory, and web access. The web tools are the weakest part though. Try fetching anything with a protection layer and you get an error.

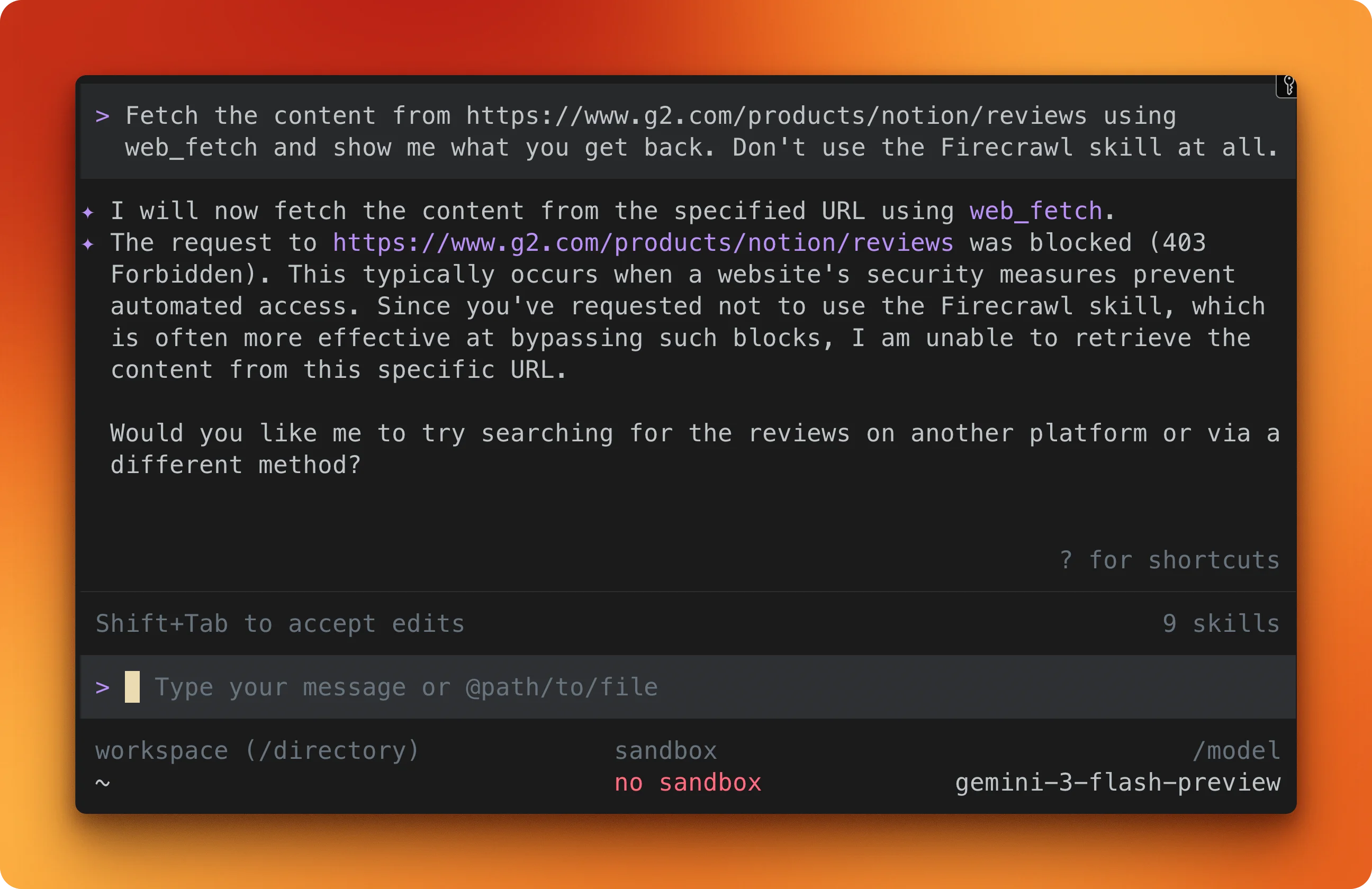

For example, you can tell Gemini CLI:

fetch the content from https://www.g2.com/products/notion/reviews using web_fetch and show me what you get back. Don't use the Firecrawl skill at all.You'll get a 403 error:

How to integrate Firecrawl in the Gemini CLI

Grab an API key by signing up at firecrawl.dev (the free tier gives you 1,000 credits per month) and set it as an environment variable:

export FIRECRAWL_API_KEY=your_key_hereAgent skills (recommended)

The quickest path. One command installs 8 Firecrawl skills into your system:

npx -y firecrawl-cli@latest init --all --browserThe skills land in ~/.agents/skills/ and Gemini CLI picks them up automatically:

| Skill | What it does |

|---|---|

| Scrape | Extracts a single page as clean markdown |

| Crawl | Follows links and scrapes multiple pages from a site |

| Map | Discovers all URLs on a domain |

| Agent | Searches and gathers structured data from the web using a natural language prompt |

| Search | Web search with optional full-page content |

| Batch Scrape | Scrapes multiple URLs in parallel |

| Interact | Controls a live browser session with prompts or code |

| Browser | Direct Playwright browser automation |

Gemini CLI figures out which skill to use based on what you ask it to do. Agent Skills use the same SKILL.md specification across Claude Code, OpenAI Codex CLI, and Cursor without modification — for a full breakdown of how the format works and why it's cross-platform portable, see how agent skills work.

For Codex specifically, Firecrawl can also be added via MCP as an alternative to the skill path — either approach gives Codex full-page scraping and web search capabilities that go beyond what Codex can fetch on its own, and fixes the stale cached search that Codex ships with by default.

For a curated list of the best Codex skills worth installing in your Codex workflow, see the best Codex skills guide.

MCP server (alternative)

If you prefer configuring tools manually, add this block to ~/.gemini/settings.json:

{

"mcpServers": {

"firecrawl-mcp": {

"command": "npx",

"args": ["-y", "firecrawl-mcp"],

"env": { "FIRECRAWL_API_KEY": "YOUR_KEY" }

}

}

}This exposes 12 Firecrawl tools inside your Gemini CLI session. The MCP server documentation lists all of them. If you've used MCP with other agents, the setup is nearly identical to Firecrawl's MCP integration in Cursor.

Working examples

The examples below cover three Firecrawl capabilities: scraping a page as markdown, pulling structured data with a JSON schema, and interacting with a live browser session. All three use https://www.g2.com/products/notion/reviews, the page behind G2's protection layer that returned a 403 when Gemini CLI's built-in fetch tool tried it earlier.

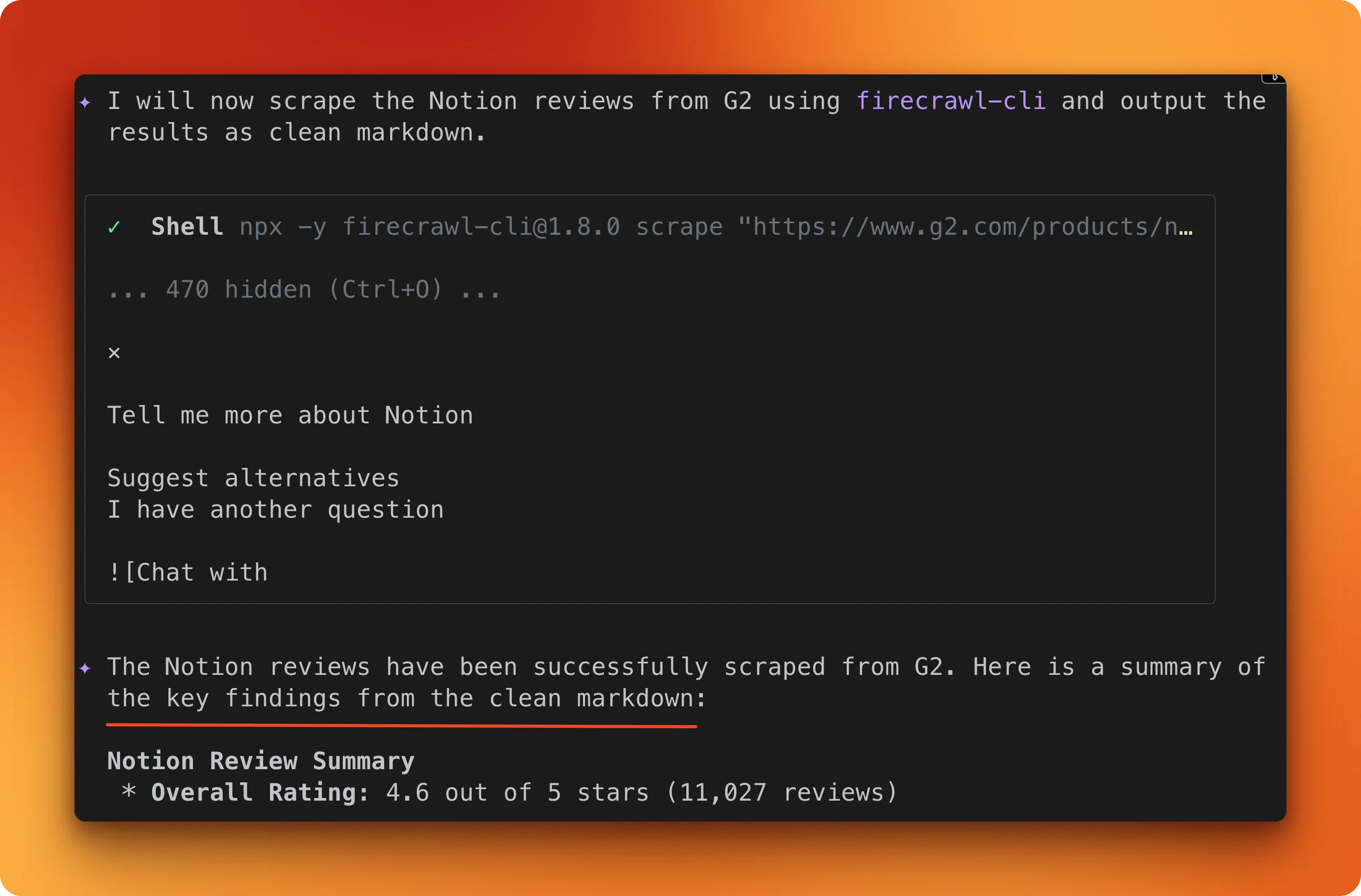

Scraping a page as markdown

Tell Gemini CLI to scrape the G2 Notion reviews page using the Firecrawl scrape skill.

Firecrawl opens a full browser, loads the page through its protection layers, waits for JavaScript to finish rendering, and converts the result to markdown. The output is Notion's review profile: 4.6 out of 5 stars across 11,027 reviews.

One /scrape call, one credit.

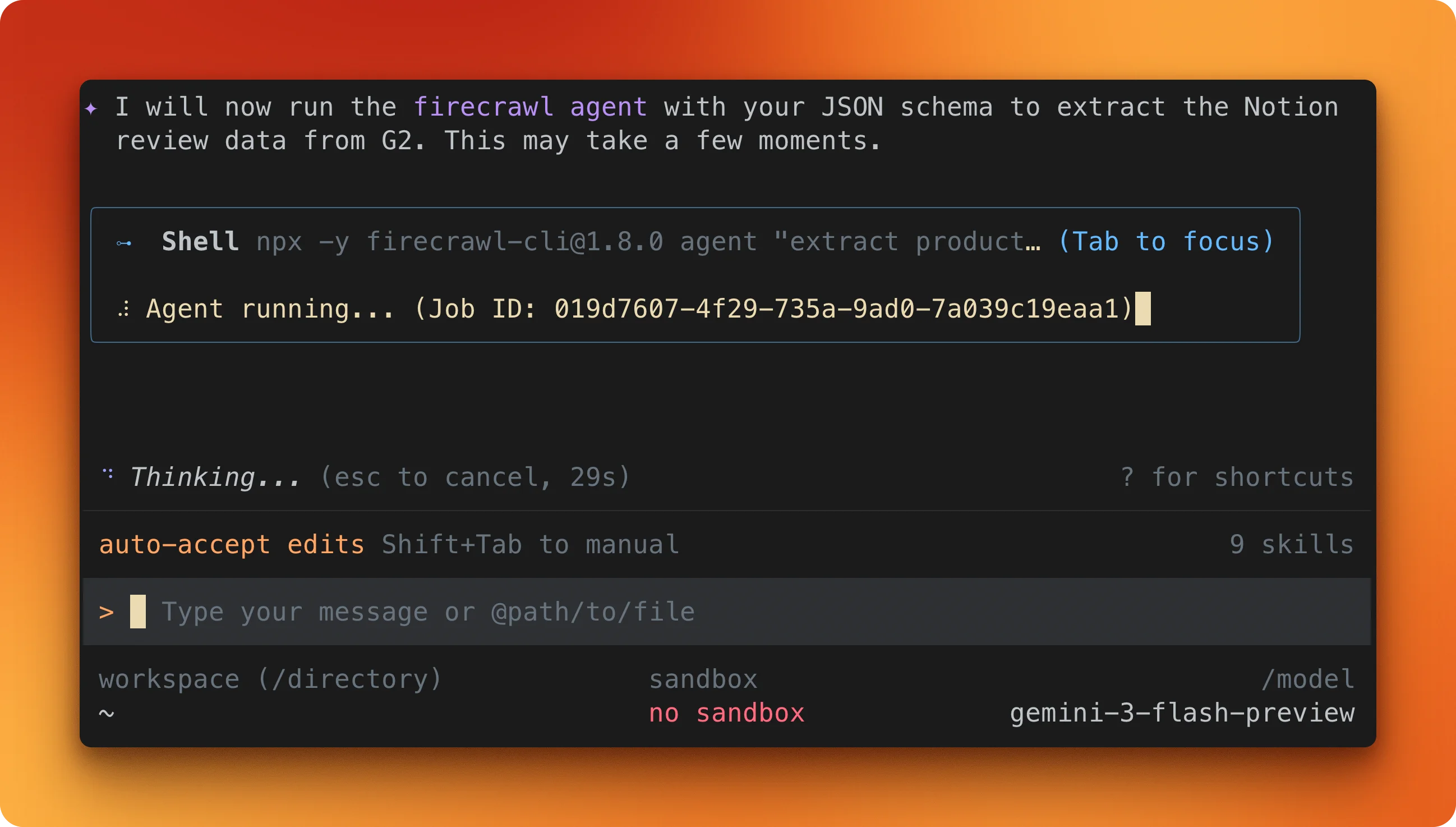

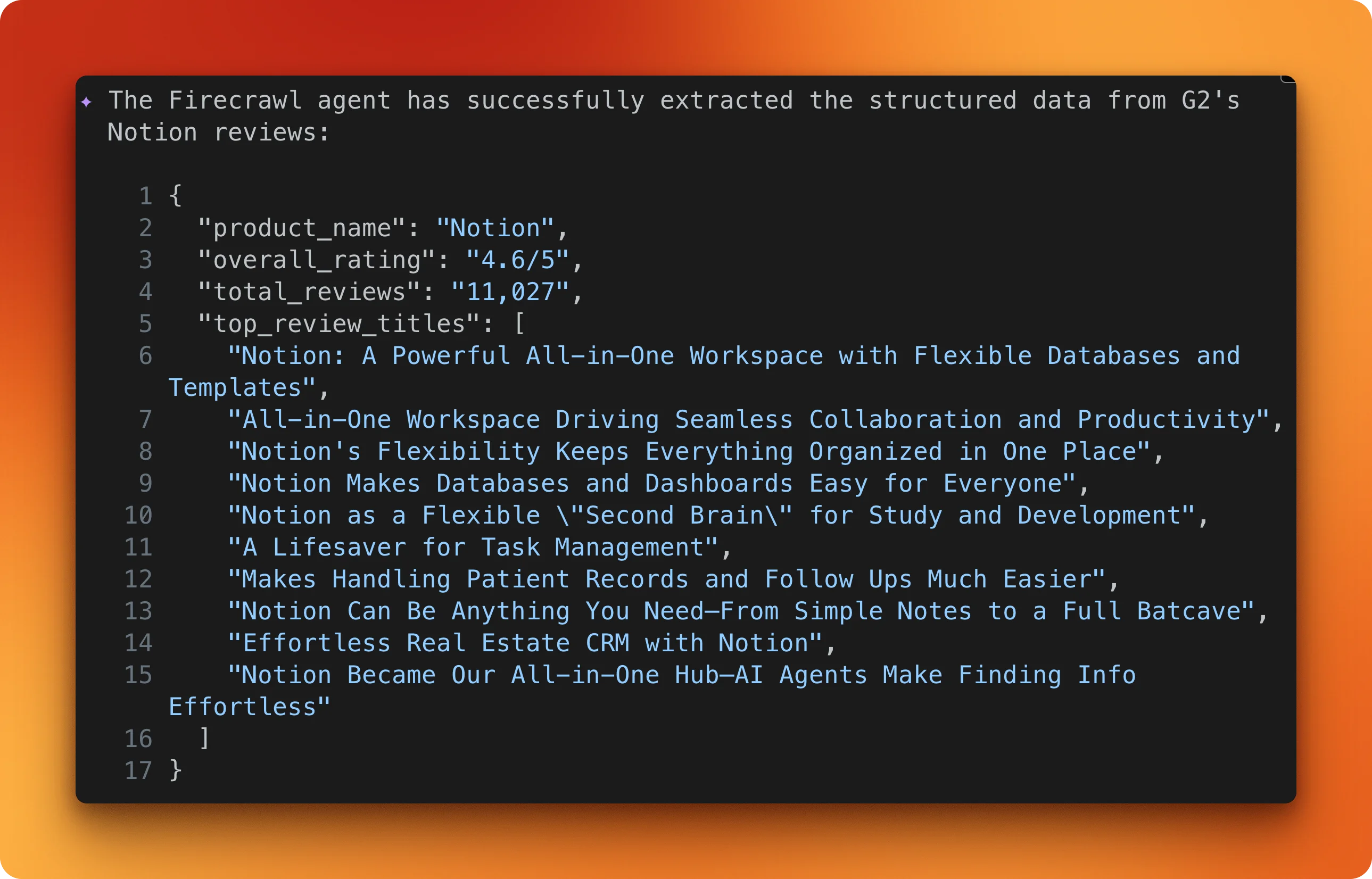

Structured extraction with a JSON schema

Markdown is useful, but sometimes you need the data in a specific shape.

Pass a JSON schema specifying product_name, overall_rating, total_reviews, and top_review_titles. Firecrawl's agent navigates to the same page and maps the content to your schema.

The result is typed JSON matching your schema, with no parsing logic on your end. You can ask Gemini to save it as a JSON file and iterate for hundreds of URLs if you have them in a list. The free tier gives you 1,000 credits per month to try this out.

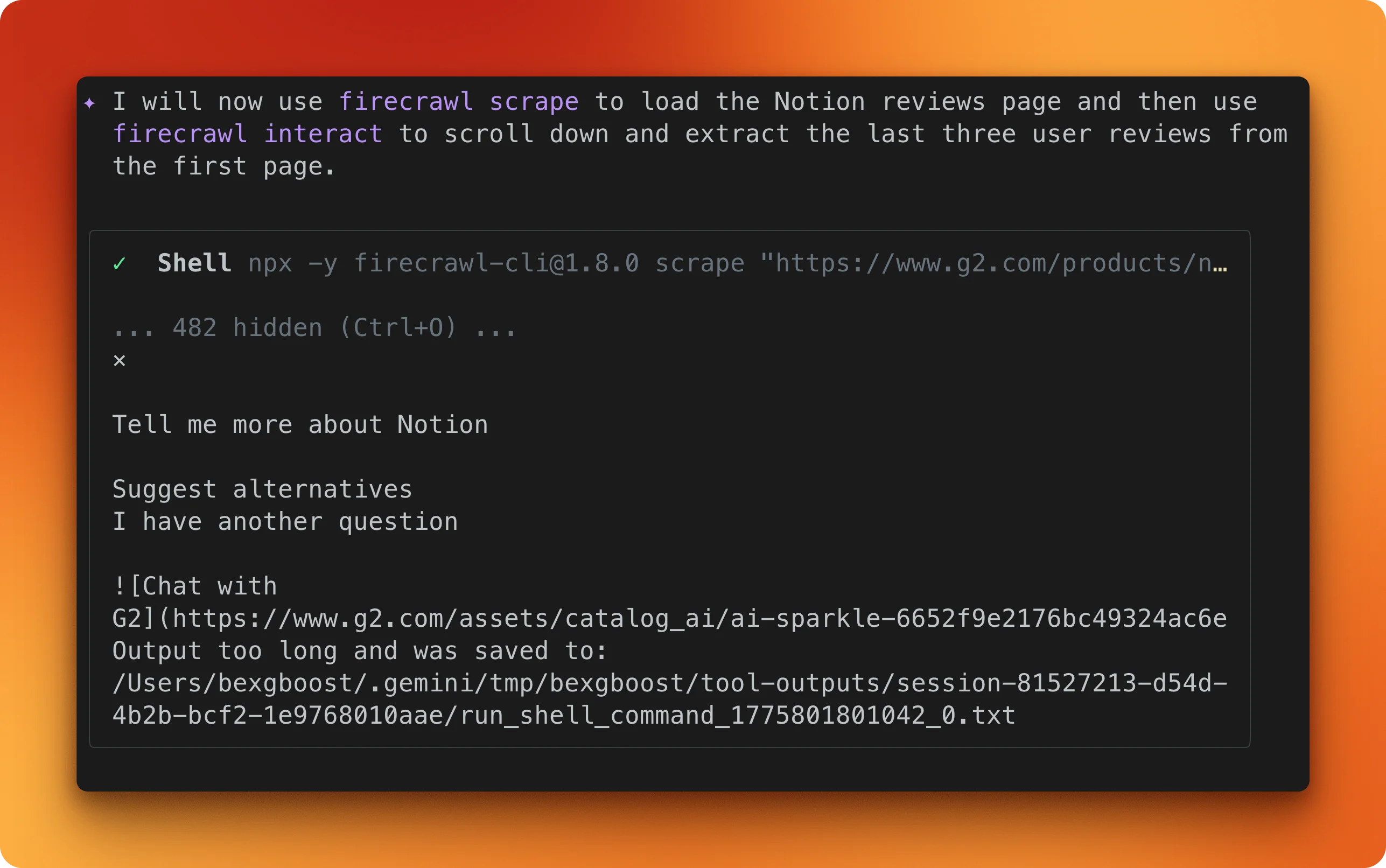

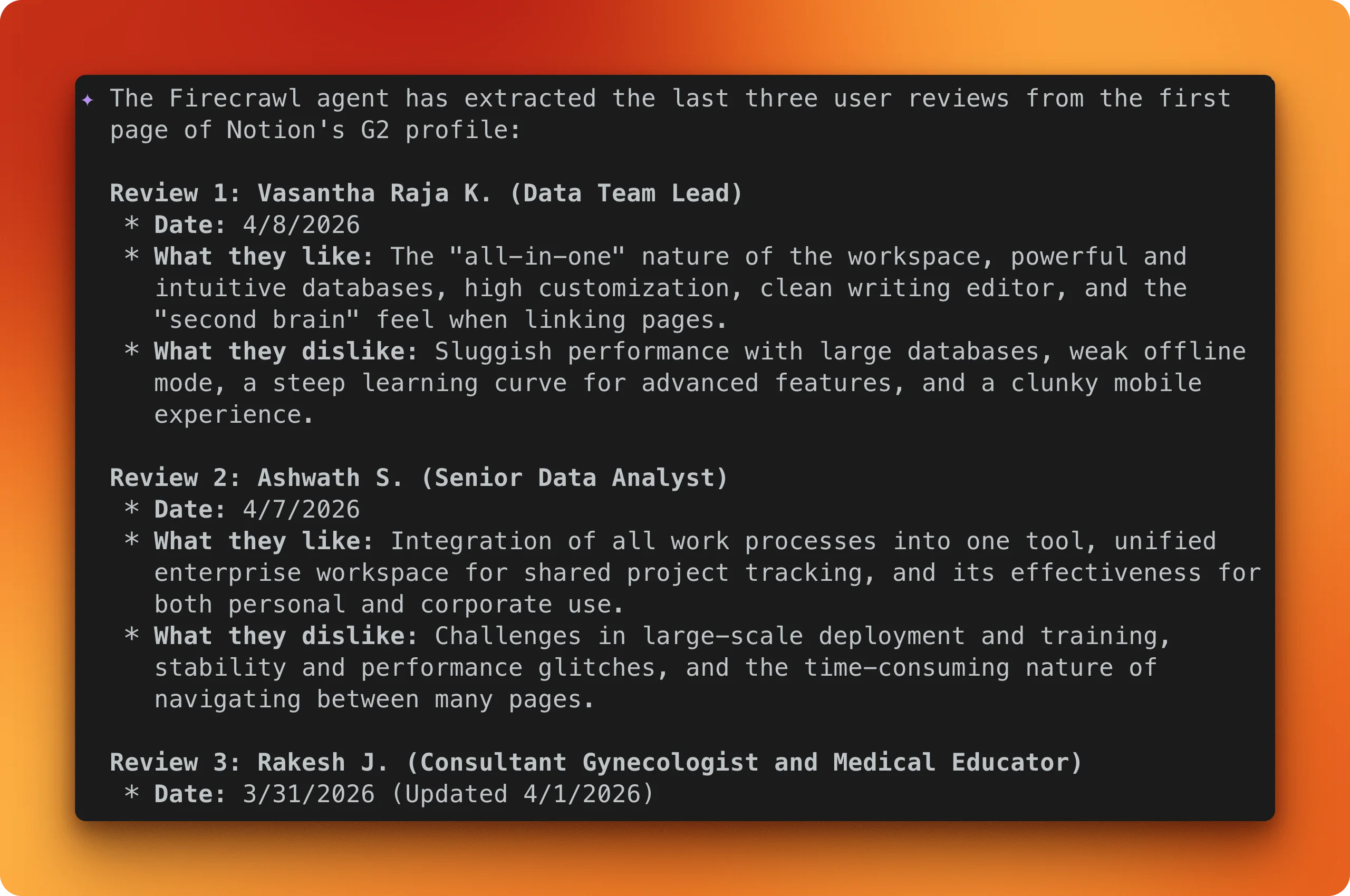

Browser interaction with /interact

Scraping and extraction both work on the page as it first loads.

For content that requires scrolling, clicking tabs, or navigating pagination, there's the /interact endpoint. It opens a browser session on Firecrawl's servers and controls it based on your prompt. The browser runs remotely, not on your machine.

In this example, the prompt tells Gemini CLI to load the same G2 page, scroll through the reviews section, and pull out the first three user reviews with names, dates, and what they liked and disliked:

Gemini CLI web fetch vs. Firecrawl

The examples above show what Firecrawl does, but they don't explain why the built-in tool fails in the first place.

Gemini CLI's fetch tool works through two paths.

The primary one sends your URL to Google's backend, which checks its cache and fetches the page if needed. When that path fails (which it does often on protected or dynamic pages), the tool falls back to a local HTTP request with a 10-second timeout. Neither path runs JavaScript, so anything built with React, Vue, or similar frameworks comes back as an empty HTML shell with no error message.

The local fallback has its own issues on top of that.

It silently cuts off responses at 100,000 characters, giving the model partial content with no way to know anything is missing. It also runs everything through a text converter regardless of content type, which garbles JSON data from APIs.

| Feature | web_fetch | Firecrawl |

|---|---|---|

| Basic page fetch | Google's backend or local HTTP with 10s timeout | Full browser rendering, responses in ~3-4 seconds |

| JavaScript rendering | None. JS-heavy pages return empty HTML | Full browser engine. Renders SPAs, waits for slow JS |

| Structured data extraction | None. JSON responses get corrupted | /agent with JSON schema or natural language prompt |

| Site crawling and mapping | Only fetches URLs you give it, max 20 | /crawl follows links up to 10K pages. /map discovers URLs for 1 credit |

| Screenshot capture | None | Full-page screenshots, URLs valid for 24 hours |

| Browser interaction | None | /interact controls a live browser with prompts or code |

| Batch operations | Up to 20 URLs per request | Parallel scraping with sync/async modes and webhook notifications |

The table comes down to one split: if the page is static, public, and unprotected, the built-in tool works. For everything else, you need something that runs a browser and handles protection layers. The same issue shows up in other coding agents too, as the Claude web fetch vs. Firecrawl comparison covers.

Prompt mode vs. code mode

The /interact endpoint from the examples above has two modes, and it's worth understanding the difference since they're priced differently.

Prompt mode accepts commands in plain English. You describe what you want and Firecrawl handles the clicks, scrolling, and typing. This costs 7 credits per minute and works well for one-off tasks.

from firecrawl import Firecrawl

app = Firecrawl(api_key="fc-YOUR-API-KEY")

result = app.scrape("https://www.amazon.com", formats=["markdown"])

scrape_id = result.metadata.scrape_id

app.interact(scrape_id, prompt="Search for iPhone 16 Pro Max")

response = app.interact(scrape_id, prompt="Click the first result and tell me the price")

print(response.output)

app.stop_interaction(scrape_id)Code mode gives you direct access to the Playwright API in Node.js, Python, or Bash. This costs 2 credits per minute and is better for repeatable workflows.

response = app.interact(

scrape_id,

code="""

await page.click('#load-more')

await page.wait_for_load_state('networkidle')

items = await page.query_selector_all('.item')

data = []

for item in items:

text = await item.text_content()

data.append(text.strip())

print(json.dumps(data))

""",

language="python",

)

print(response.stdout)Sessions stay open between calls, so you can chain multiple steps without re-navigating. Each session includes a live view URL for watching what the browser is doing in real time. They close after 10 minutes or 5 minutes of inactivity.

To be fair, the built-in fetch tool works fine for open, static pages. If you're grabbing content from a public docs page or a plain HTML site, it gets the job done with no API key and no extra setup.

Firecrawl is for the pages where that isn't enough. You can sign up here and start with the Agent Skills path in under a minute.

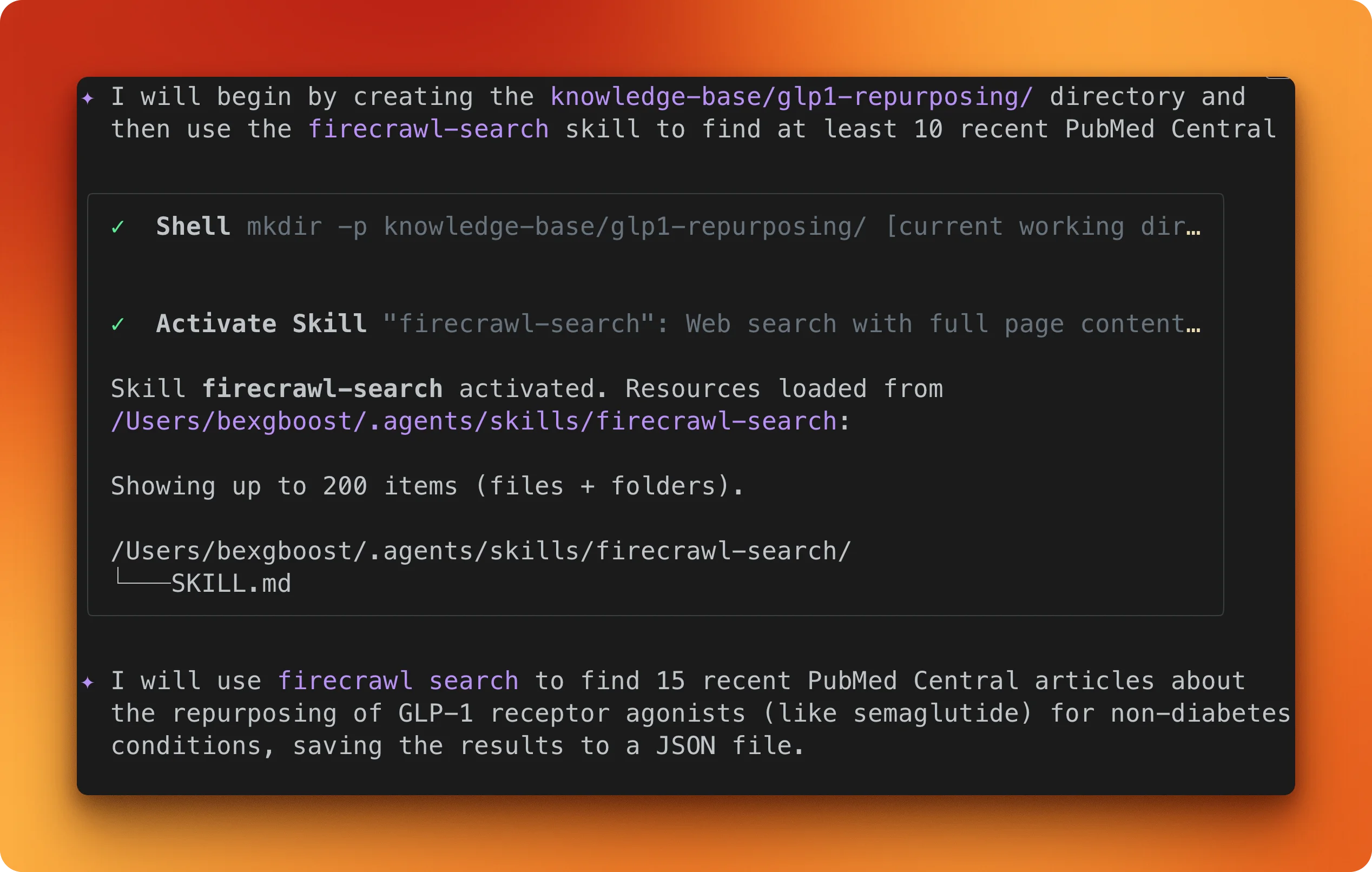

Collecting web data for RAG

So far, every example has been about one page at a time. This one collects 18 research papers and builds something you can query.

GLP-1 receptor agonists like semaglutide are being studied for Alzheimer's, addiction, and kidney disease on top of diabetes. New trial results show up on PubMed monthly. Most of them came out after Gemini's training cutoff, so if you ask about the latest Phase 3 data, the model either guesses or says it doesn't know.

The fix is straightforward: use Firecrawl to search PubMed Central, scrape the papers as markdown, and save them to a local directory. Gemini CLI can then read those files and answer questions from them.

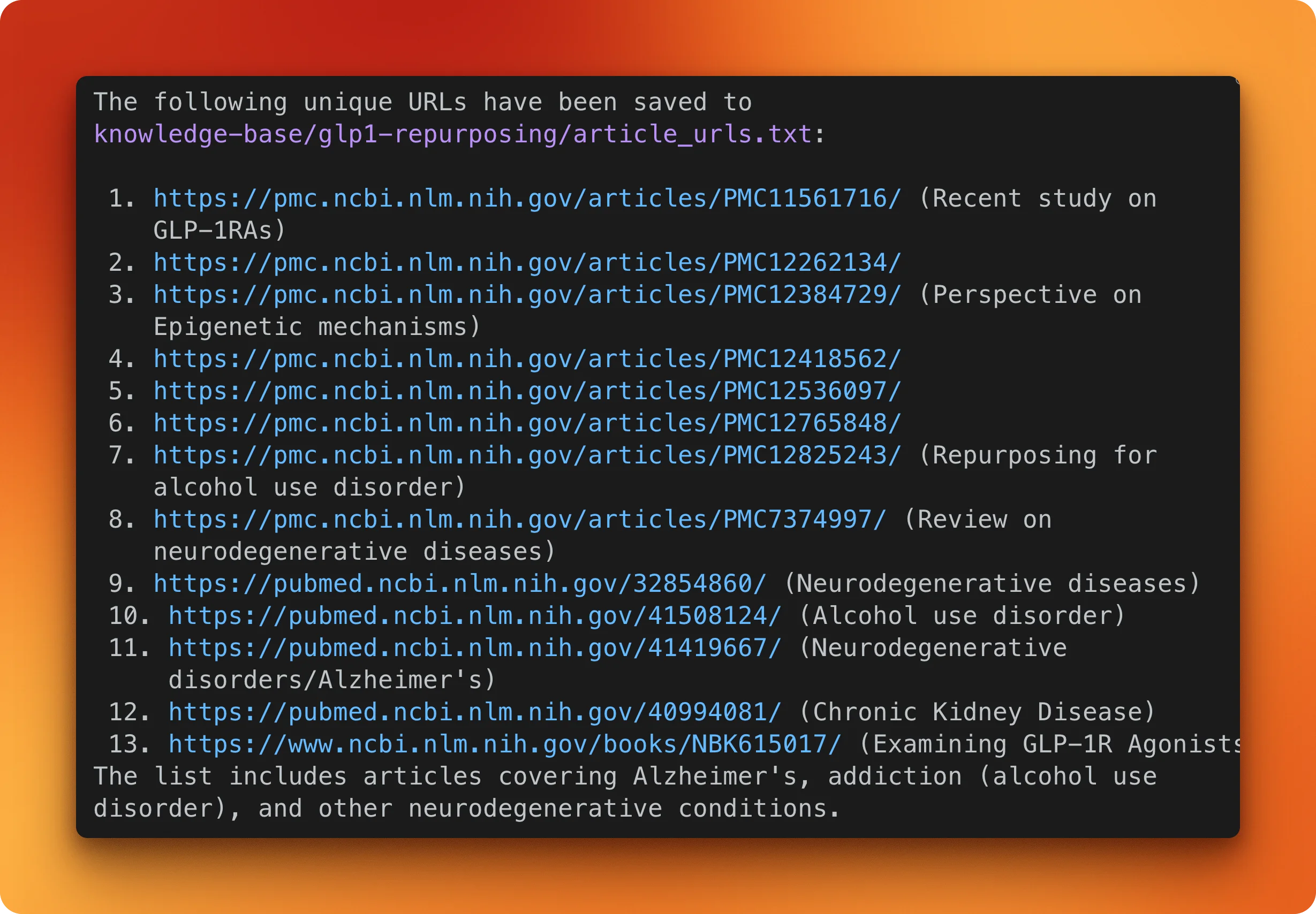

Create a directory called knowledge-base/glp1-repurposing/. Use Firecrawl to search

for recent PubMed Central articles about GLP-1 receptor agonists being studied for

non-diabetes conditions. Find at least 10 article URLs.Gemini CLI activates the firecrawl-search skill and runs the query.

It finds 13 unique PMC article URLs covering Alzheimer's, alcohol use disorder, neurodegenerative diseases, and chronic kidney disease.

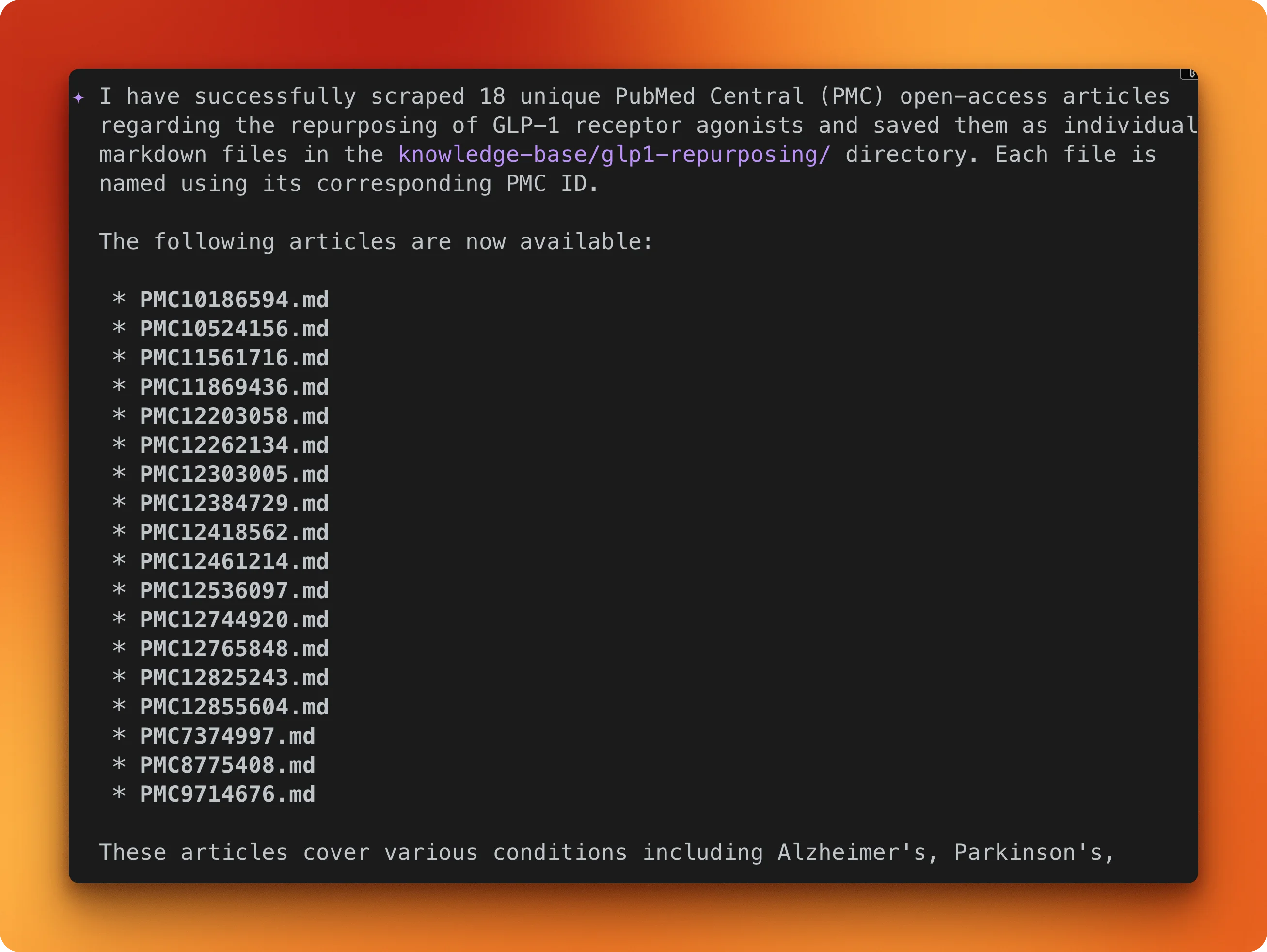

The next prompt scrapes all of them and saves each as a markdown file:

Scrape each article URL using Firecrawl and save them as markdown files in

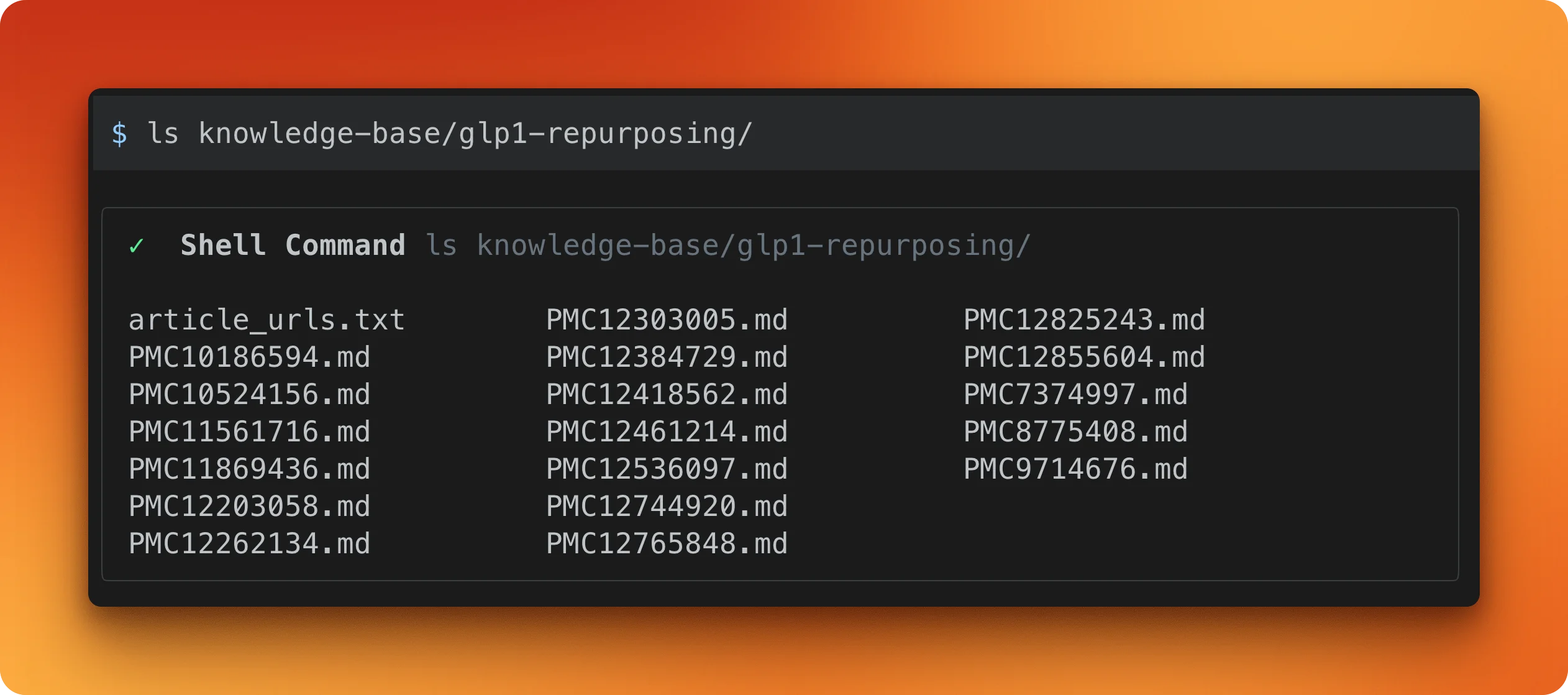

knowledge-base/glp1-repurposing/. Name each file by its PMC ID.Firecrawl ends up scraping 18 articles (some extra URLs got discovered along the way) and saves each one as a .md file.

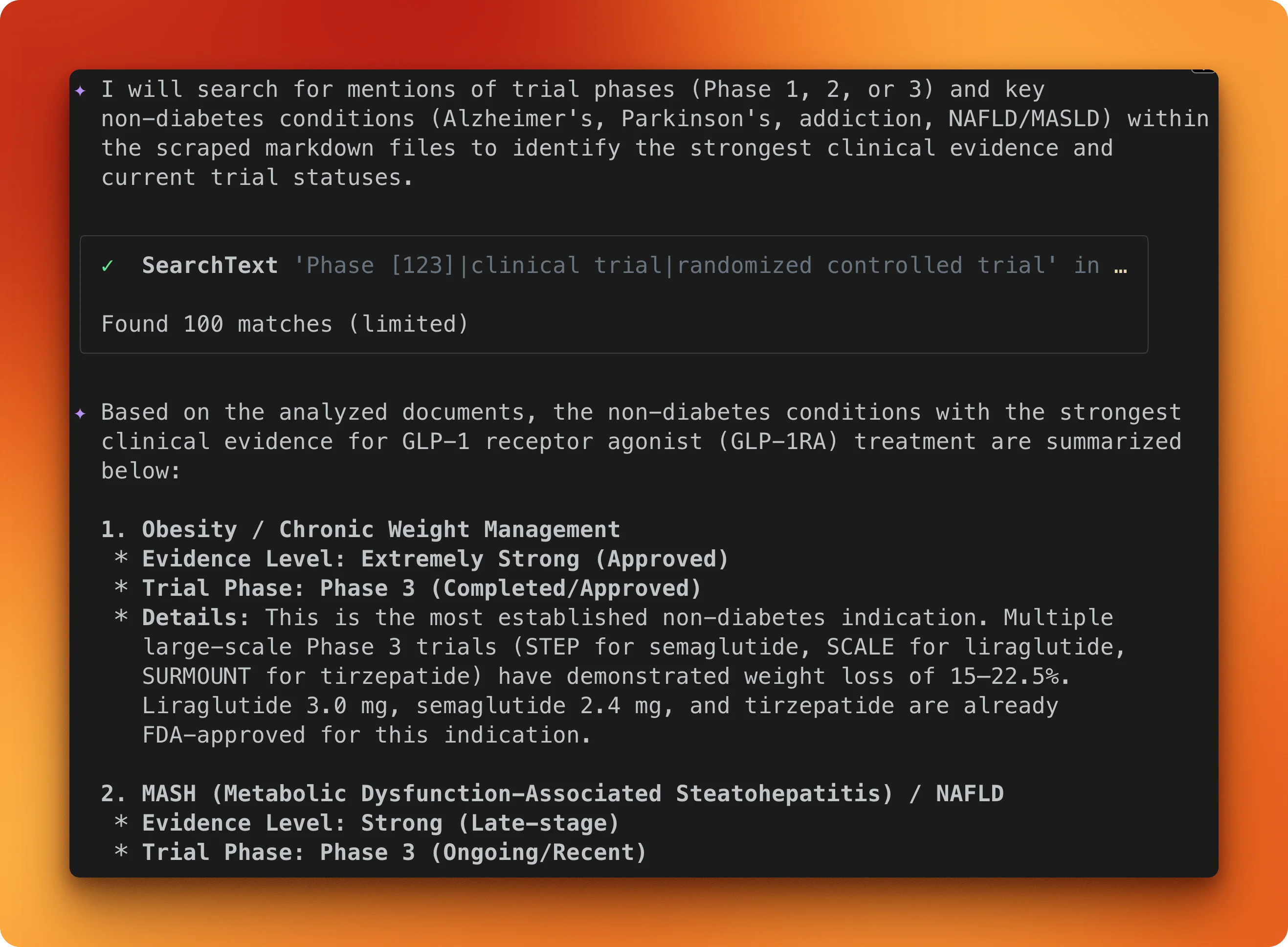

With the files saved, the last prompt queries across the whole collection:

Read all the markdown files in knowledge-base/glp1-repurposing/ and tell me which

non-diabetes conditions have the strongest clinical evidence for GLP-1 receptor

agonist treatment, and what trial phases are they in.Gemini CLI reads every file in the directory and synthesizes across all 18 papers.

The model identifies obesity and chronic weight management as having the strongest evidence (Phase 3, FDA-approved), with MASH/NAFLD close behind (Phase 3, ongoing).

None of this came from the model's training data. It came from the papers in that directory. For a deeper look at how scraping fits into retrieval pipelines, see the modern RAG tech stack breakdown.

Conclusion

Gemini CLI's built-in web tools work for static, public pages. For anything protected, dynamic, or structured, Firecrawl can handle it.

If you just want things working, Agent Skills is one install command. The MCP server gives you finer control over which tools are exposed.

Either way, the Firecrawl docs go deeper on each endpoint, and the Gemini CLI repo covers how to write your own agent skills. For teams wanting full control over the agent stack — custom models, self-hosted infrastructure, and domain-specific skill playbooks — the open-source firecrawl-agent scaffolds a complete web agent project in two commands.

Firecrawl gives AI agents and apps fast, reliable web context with strong search, scraping, and interaction tools. AI agents can fetch the Firecrawl onboarding skill to sign up your user, get an API key, and start building with Firecrawl.

Frequently Asked Questions

How much does Firecrawl cost?

Free tier gives 1,000 credits per month. Hobby plan is $16/month for 5,000 credits. Standard is $83/month for 100,000 credits. Base scrape costs 1 credit per page, with JSON extraction adding 4 credits and enhanced proxy adding 4 more.

Does Firecrawl work with other CLI AI tools besides Gemini CLI?

Yes. The Skills path works with Claude Code, Cursor, and OpenAI Codex. The MCP server path works with Claude Code, Cursor, Windsurf, and VS Code.

Can I self-host Firecrawl?

Yes, via Docker Compose with an API server, Playwright microservice, Redis, and PostgreSQL. The self-hosted version lacks advanced bypass and IP rotation.

What about data privacy?

Firecrawl offers a zero data retention option on enterprise plans at an additional 1 credit per page. Self-hosting gives full data control but without the advanced bypass.

How does Firecrawl compare to Crawl4AI or Jina Reader?

Crawl4AI is open-source and self-hostable but you manage all infrastructure. Jina Reader uses ML models for simple URL-to-markdown conversion but has no crawling or interaction. Firecrawl bundles scraping, crawling, mapping, extraction, and browser interaction as a managed API.

What are Gemini CLI web fetch's rate limits?

The tool allows max 10 requests per hostname per 60-second window. The free OAuth tier allows 1,000 requests per day and 60 per minute. Gemini 2.5 Pro free tier is limited to 100 requests per day and 5 per minute.

Which Firecrawl endpoints are most useful in a CLI context?

The /scrape endpoint with markdown format for single-page content at 1 credit. The /map endpoint for URL discovery at 1 credit. And /search for web search with optional page scraping at 2 credits per 10 results.

Is Gemini CLI's web fetch getting better?

A multi-URL fallback bug was fixed in March 2026. But urlContext API inconsistencies remain unresolved, and there are no plans for headless browser or JavaScript rendering in the built-in tool.

How does Firecrawl web search work?

Firecrawl's /search endpoint runs a web search and returns full-page markdown content for each result in a single API call. Unlike standard search APIs that return only titles and snippets, Firecrawl scrapes each result page through a real browser, so you get structured, LLM-ready content instead of shallow metadata. It costs 2 credits per 10 results and supports optional filtering by domain, date, and language.