TL;DR

- AI scraping replaces brittle CSS selectors with natural language — describe what you want, the tool figures out how to get it.

| Tool | Best for | Starting price | No-code |
|---|---|---|---|
| Firecrawl | Developers, AI apps, agents | Free (1,000 credits/month) | Yes |
| ScrapingBee | Headless browser scraping, anti-bot | $49/month | No |
| Import.io | Enterprise data pipelines | Custom | Yes |
| Browse.AI | Monitoring, non-technical users | Free (100 runs) | Yes |
| Kadoa | Price tracking, structured extraction | Free (500 pages) | No |
| Diffbot | Semantic extraction, Knowledge Graph | $299/month | No |
| Octoparse | Desktop + cloud, scheduled scraping | Free (limited) | Yes |

What is AI-powered web scraping?

AI-powered web scraping uses machine learning and large language models to extract web content semantically — you describe what data you want in plain English, and the AI figures out how to get it, even as site layouts change.

When I first started learning programming in 2020, web scraping meant spending hours in browser DevTools testing XPath queries, writing CSS selectors in BeautifulSoup, and debugging pagination logic. Those were the days.

Fast-forward to today, and that approach is mostly obsolete. AI-powered web scraping uses machine learning models and large language models to understand webpage content the way humans do. Instead of relying on brittle selectors that break whenever a site updates its layout, AI systems identify what you're looking for based on context and meaning — not element positions in the DOM.

These tools navigate complex sites, handle JavaScript-heavy pages, manage anti-bot infrastructure, and extract data from non-standard formats, all without requiring you to write a single selector.
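To make the brittleness concrete, here is a minimal sketch of a selector-style extractor built on Python's standard `html.parser`. The two HTML snippets and the class names (`price`, `amount-v2`) are made up for illustration; the point is only that a class rename, which a human reader would not even notice, leaves the selector with nothing to match.

```python
from html.parser import HTMLParser


class ClassTextExtractor(HTMLParser):
    """Collect the text of every element carrying a given CSS class.

    Simplified on purpose: it assumes no void tags (such as <br>) appear
    inside a matching element.
    """

    def __init__(self, css_class):
        super().__init__()
        self.css_class = css_class
        self._depth = 0  # > 0 while we are inside a matching element
        self.matches = []

    def handle_starttag(self, tag, attrs):
        classes = (dict(attrs).get("class") or "").split()
        if self._depth or self.css_class in classes:
            self._depth += 1
            if self._depth == 1:
                self.matches.append("")  # start a new match

    def handle_endtag(self, tag):
        if self._depth:
            self._depth -= 1

    def handle_data(self, data):
        if self._depth:
            self.matches[-1] += data


def extract_by_class(html, css_class):
    parser = ClassTextExtractor(css_class)
    parser.feed(html)
    return [m.strip() for m in parser.matches]


# Hypothetical markup before and after a redesign that renames one class.
OLD_LAYOUT = '<div class="product"><span class="price">$19.99</span></div>'
NEW_LAYOUT = '<div class="product"><span class="amount-v2">$19.99</span></div>'

print(extract_by_class(OLD_LAYOUT, "price"))  # ['$19.99']
print(extract_by_class(NEW_LAYOUT, "price"))  # []
```

The price is still on the page in the second layout, but the scraper returns an empty list. A semantic extractor is asked for "the price" rather than for a class name, which is why it can survive this kind of change.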

Why should you use AI-powered web scraping in 2026?

- Selectors break constantly. Websites redesign, A/B test, and ship updates daily. Selector-based scrapers need constant maintenance. AI scrapers understand content semantically and adapt automatically.

- Most modern sites require JavaScript. SPAs, infinite scroll, lazy-loaded content — plain HTTP requests return empty shells. AI scraping tools handle JS rendering in the cloud with no browser setup on your end.

- AI agents need live web data. In 2026, a growing share of scraping feeds directly into LLM pipelines, RAG systems, and autonomous agents. Clean, structured web data is the raw material — and AI scrapers output exactly that.

- Interaction is now table stakes. Login walls, search forms, "Load More" buttons, and filter dropdowns gate most valuable data. Modern scraping APIs like Firecrawl's /interact endpoint let you click, type, and navigate before extracting.

- Natural language replaces code for non-developers. Describe what you need in plain English and the tool figures out how to get it: no XPath, no CSS selectors, no scraping framework to learn.

- Scale without infrastructure. Proxy rotation, rate limiting, concurrency, and retries are handled for you. One API call replaces weeks of scraping infrastructure work.
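For a sense of what "handled for you" replaces, here is a rough sketch of just one item from that list: retries with exponential backoff. It is not how any particular vendor implements it. `make_flaky_fetch` is a hypothetical stand-in for a real HTTP call, and the delay values are arbitrary.

```python
import random
import time


def fetch_with_retries(fetch, url, max_attempts=4, base_delay=0.5, sleep=time.sleep):
    """Call fetch(url), retrying on any exception with exponential backoff."""
    for attempt in range(1, max_attempts + 1):
        try:
            return fetch(url)
        except Exception:
            if attempt == max_attempts:
                raise  # out of attempts: surface the last error
            # Delay doubles each time (base, 2*base, 4*base, ...),
            # plus up to 10% random jitter so clients do not retry in lockstep.
            delay = base_delay * 2 ** (attempt - 1)
            sleep(delay + random.uniform(0, delay * 0.1))


def make_flaky_fetch(failures):
    """Hypothetical fetcher that fails `failures` times, then succeeds."""
    state = {"left": failures}

    def fetch(url):
        if state["left"] > 0:
            state["left"] -= 1
            raise ConnectionError("temporary failure")
        return f"<html>content of {url}</html>"

    return fetch


# Record the delays instead of actually sleeping, so the demo runs instantly.
delays = []
page = fetch_with_retries(make_flaky_fetch(2), "https://example.com", sleep=delays.append)
print(page)
print(f"retried {len(delays)} times")
```

Proxy rotation, rate limiting, and concurrency control each need similar care, which is where the weeks of infrastructure work come from.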

Leading AI web scraping solutions

Firecrawl: the best choice

Firecrawl is the web data API built specifically for AI. Where most scraping tools stop at fetching HTML, Firecrawl handles the full stack: JavaScript rendering, anti-bot measures, proxy rotation, structured extraction, autonomous web research, and now interactive browser sessions — all through a single API.

Core capabilities:

- Scrape any URL to clean, LLM-ready markdown. JavaScript-heavy sites, SPAs, and dynamically loaded content all work out of the box.

- Crawl entire websites with one API call. No URL queue management, no recursive logic, no maintenance — just pass a root URL and get back all pages.

- Agent endpoint (/agent): give it a research prompt and it autonomously browses multiple sources, searches, and returns structured results without you writing orchestration code.

- Interact endpoint (/interact): scrape a page and stay in the session to click buttons, fill forms, handle logins, and extract data that only appears after an action.
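As a rough sketch of the first capability, a single-page scrape to markdown might look like the following over plain HTTP. Treat the details as assumptions to verify against the current Firecrawl API reference: the endpoint path (`/v1/scrape`), the `formats` field, the response shape, and the `FIRECRAWL_API_KEY` environment variable name are not taken from this article.

```python
import json
import os
import urllib.request

# Assumption: endpoint path and payload fields; check the current API docs.
FIRECRAWL_SCRAPE_URL = "https://api.firecrawl.dev/v1/scrape"


def build_scrape_request(target_url, api_key):
    """Build (but do not send) a POST request asking for markdown output."""
    payload = {"url": target_url, "formats": ["markdown"]}
    return urllib.request.Request(
        FIRECRAWL_SCRAPE_URL,
        data=json.dumps(payload).encode("utf-8"),
        headers={
            "Authorization": f"Bearer {api_key}",
            "Content-Type": "application/json",
        },
        method="POST",
    )


def scrape_markdown(target_url, api_key):
    """Send the request and return the page as markdown."""
    request = build_scrape_request(target_url, api_key)
    with urllib.request.urlopen(request, timeout=60) as response:
        body = json.load(response)
    # Assumed response shape: {"success": true, "data": {"markdown": "..."}}
    return body["data"]["markdown"]


if __name__ == "__main__":
    key = os.environ.get("FIRECRAWL_API_KEY")  # assumed variable name
    if key:
        print(scrape_markdown("https://example.com", key)[:200])
```

There is no browser setup or selector logic on the caller's side; the request names a URL and an output format, and the rendering happens remotely.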

The /interact endpoint unlocks a new class of scraping problems. Most valuable web data sits behind something: a search form, a "Load More" button, a login, a filter dropdown. Until now, that data required writing custom Playwright scripts and managing browser sessions yourself. With /interact, you scrape a page and immediately take actions using either natural language or code:

firecrawl interact "Search for iPhone 16 Pro Max"

firecrawl interact "Click the first result and return the price"

Or use Playwright for precise control: the page object is pre-connected to the live session, so no setup is needed. Read the full /interact announcement for code examples and pricing.

Firecrawl is also the best solution when you want to start with search and then scrape. Search, scrape, crawl, and interact are all available through a single API, which gives AI agents and apps fast, reliable web context.

For those wanting to test Firecrawl without any setup, the Firecrawl playground lets you scrape any URL and experiment with extraction schemas directly in the browser — no API key or code required.

Firecrawl is also an ethical scraping solution. It respects robots.txt, rate limits requests to avoid overloading servers, and actively works with website owners rather than against them. A notable example: Firecrawl partnered with Wikipedia to provide structured, clean access to Wikipedia's content for AI applications — a collaboration built on transparency and mutual benefit rather than circumventing protections.

Pricing:

- Free: 1,000 credits/month, 2 concurrent requests

- Hobby: 5,000 credits/month, 5 concurrent requests

- Standard: 100,000 credits/month, 50 concurrent requests

- Growth: 500,000 credits/month, 100 concurrent requests

- Scale: $599/month for 1,000,000 credits, 150 concurrent requests, priority support

- Enterprise: Custom credits, custom pricing, unlimited pages, dedicated support & SLA, bulk discounts, zero-data retention, SSO

Firecrawl also has native integrations with popular no-code tools — including Lovable and n8n — so it can be used without writing any code at all.

Firecrawl is the right choice for developers building AI applications, RAG pipelines, autonomous agents, or any workflow that needs reliable, structured web data at scale. Get started for free at firecrawl.dev.

Other notable AI scraping tools

ScrapingBee

ScrapingBee has 368 upvotes on Product Hunt and markets itself as "the best web scraping API to avoid getting blocked."

Key features:

- Headless browser management using the latest Chrome version

- Automatic proxy rotation with IP geolocation

- AI-powered data extraction without CSS selectors — describe what you need in plain English

- JavaScript rendering for single-page applications built with React, Angular, Vue, or any other library

- Response formats including HTML, JSON, markdown, and screenshots

- Google Search API for scraping search engine result pages

- 1,000 free API calls with no credit card required

In our view, ScrapingBee can work for a range of workflows, from traditional web scraping to AI-driven use cases. Markdown output is available, which helps streamline workflows for LLM-based applications.

Pricing:

- Freelance: $49/month for 250,000 API credits and 10 concurrent requests

- Startup: $99/month for 1,000,000 API credits and 50 concurrent requests

- Business: $249/month for 3,000,000 API credits and 100 concurrent requests

- Business+: $599/month for 8,000,000 API credits and 200 concurrent requests
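One way to compare these tiers is cost per 1,000 credits. The short calculation below uses only the prices and credit counts listed above. It assumes credits are the billing unit; how many credits a single request consumes is not stated here, so this is not a per-request cost.

```python
# Plan name -> (monthly price in USD, API credits per month), from the list above.
SCRAPINGBEE_PLANS = {
    "Freelance": (49, 250_000),
    "Startup": (99, 1_000_000),
    "Business": (249, 3_000_000),
    "Business+": (599, 8_000_000),
}


def cost_per_thousand(price_usd, credits):
    """Price of 1,000 credits on a plan, in USD."""
    return price_usd / credits * 1000


for name, (price, credits) in SCRAPINGBEE_PLANS.items():
    print(f"{name:<10} ${cost_per_thousand(price, credits):.3f} per 1,000 credits")
```

The unit price falls from roughly $0.20 per 1,000 credits on Freelance to about $0.07 on Business+, so the larger tiers only pay off if you actually use the volume.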

May be well suited for e-commerce scraping, price monitoring, extracting reviews from sites with anti-bot measures, and AI-driven use cases that could benefit from markdown output.

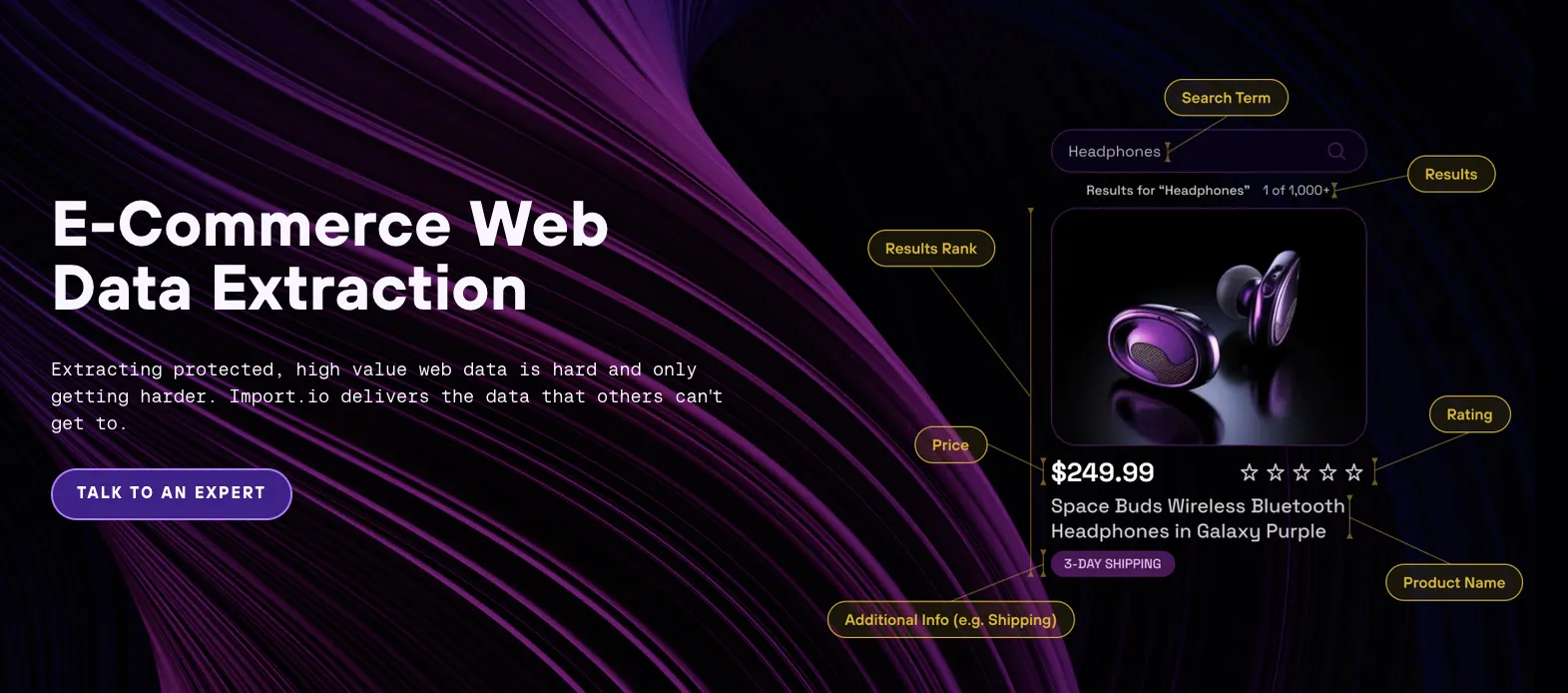

Import.io

Import.io has 306 upvotes on Product Hunt and positions itself as an enterprise-grade data extraction platform.

Key features:

- Visual workflow builder requiring minimal coding

- Scheduling and automation for regular data extraction

- Data transformation capabilities with normalization tools

- Enterprise-level support with dedicated account managers

- Integration with business intelligence platforms

What distinguishes Import.io is its focus on enterprise needs with robust governance features and data quality assurance. The platform emphasizes data transformation alongside extraction, making it suitable for organizations needing production-grade, reliable data pipelines.

Pricing:

- Custom enterprise pricing based on volume, features, and support requirements

- Plans typically start in the mid-hundreds per month

- Enterprise plans include professional services and custom SLAs

Most effective for competitive intelligence, market research, and large-scale data operations requiring reliability and consistency.

Browse.AI

Browse.AI has accumulated 913 upvotes on Product Hunt, the highest among these tools, with its no-code approach to web scraping.

Key features:

- Robot trainer with point-and-click interface for creating scrapers

- Change detection and automated monitoring capabilities

- Comprehensive error reporting and debugging tools

- Direct integration with business tools (Zapier, Airtable, Google Sheets, Make.com)

- Data cleaning with formatting options

Browse.AI stands out by making web scraping accessible to non-technical users through its intuitive robot trainer. The change detection system allows for monitoring updates to specific data points across websites without requiring continuous full scrapes. For teams wanting to go further with web scraping automation and scheduled pipelines, there are code-based options that offer more control.
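The general idea behind change detection can be sketched in a few lines. This is not Browse.AI's implementation, only one common approach: store a fingerprint of each monitored record and report the items whose fingerprint differs on the next run. The record fields and item names are invented for the example.

```python
import hashlib
import json


def fingerprint(record):
    """Stable hash of a record; key order does not affect the result."""
    canonical = json.dumps(record, sort_keys=True, separators=(",", ":"))
    return hashlib.sha256(canonical.encode("utf-8")).hexdigest()


def detect_changes(previous, current):
    """Return keys that are new or changed, and update `previous` in place."""
    changed = []
    for key, record in current.items():
        new_hash = fingerprint(record)
        if previous.get(key) != new_hash:
            changed.append(key)
        previous[key] = new_hash
    return changed


seen = {}  # key -> last known fingerprint

# First run: everything is new.
first = detect_changes(seen, {
    "widget": {"price": "19.99", "stock": "in"},
    "gadget": {"price": "5.00", "stock": "in"},
})

# Second run: only the gadget price changed (widget keys are merely reordered).
second = detect_changes(seen, {
    "widget": {"stock": "in", "price": "19.99"},
    "gadget": {"price": "4.50", "stock": "in"},
})

print(first)   # ['widget', 'gadget']
print(second)  # ['gadget']
```

Storing hashes instead of full pages keeps the monitoring state small, and downstream steps only run for the items that actually changed.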

Pricing:

- Free: 50 credits/month, 2 websites, unlimited robots

- Personal: $48/month (or $19/month billed annually) for 2,000 credits/month

- Professional: $87/month (or $69/month billed annually) for 5,000 credits/month

- Premium: Starting at $500/month (billed annually), fully managed, 600,000+ credits/year

Particularly valuable for competitive monitoring, lead generation, and content aggregation tasks requiring regular updates.

Kadoa

Kadoa has 169 upvotes on Product Hunt and focuses on simplifying web scraping with AI assistance.

Key features:

- AI-powered CSS selector generation

- Developer-friendly Python SDK

- Automated data cleaning and post-processing

- Robust error handling with retry mechanisms

- Scheduled extraction with monitoring

What differentiates Kadoa is its combination of AI simplicity with developer flexibility. The platform uses AI to generate selectors but still provides programmable interfaces for developers who need customization options.

Pricing:

- Flex: Free trial, consumption-based pricing, includes all core features and basic integrations

- Enterprise: Custom usage limits, volume discounts, all integrations (Snowflake, S3, MCP), SSO, dedicated account manager

Works best for price tracking, real estate data aggregation, and research projects requiring structured data extraction.

Diffbot

Diffbot has 384 upvotes on Product Hunt and specializes in AI-powered structured data extraction.

Key features:

- Knowledge Graph with over 20 billion entities and facts

- Advanced natural language processing for content understanding

- Visual learning algorithms for layout recognition

- Multiple specialized APIs (Article, Product, Image, etc.)

- Automatic content classification

What makes Diffbot unique is its sophisticated AI that understands web content semantically rather than relying on selectors. The platform's Knowledge Graph provides additional context to extracted data, enriching it with related information. If you need to build knowledge graphs from web data in your own pipeline, Firecrawl pairs well with graph construction frameworks for the same outcome.

Pricing:

- Free: $0/month for 10,000 credits, no credit card required

- Startup: $299/month for 250,000 credits

- Plus: $899/month for 1,000,000 credits

- Enterprise: Custom credit allotment and pricing

Particularly effective for news aggregation, product intelligence, and research applications requiring semantic understanding of content.

Octoparse

Octoparse has 25 upvotes on Product Hunt and offers both desktop and cloud-based scraping solutions.

Key features:

- Intuitive point-and-click interface with visual workflow

- Pre-built templates for common scraping scenarios

- Cloud execution for scheduled and large-scale tasks

- Multiple export formats (Excel, CSV, API, database)

- Advanced pagination handling

What distinguishes Octoparse is its hybrid approach offering both desktop software and cloud capabilities. This provides flexibility for users with different needs, from one-off projects to regular scheduled extractions. For teams that need more API-driven or AI-ready alternatives, see the best Octoparse alternatives.

Pricing:

- Free: Basic features, local extraction only, up to 10 tasks

- Standard: $69/month (billed annually) or $83/month, 3 concurrent cloud processes, task scheduling, IP rotation

- Professional: $249/month (billed quarterly) or $299/month, 20 concurrent cloud processes, advanced API, cloud storage export

- Enterprise: Custom pricing, 40+ concurrent cloud processes, team collaboration, dedicated success manager

Best suited for data mining, competitor analysis, and financial data extraction with regular scheduling needs.

Conclusion: choosing the right AI scraper

The right tool depends on what you're actually trying to do.

If you're building AI applications, RAG pipelines, or autonomous agents that need clean, structured web data, Firecrawl is the clearest choice — it's built for exactly this use case, with a free tier to get started and endpoints that handle everything from simple scrapes to full autonomous research via /agent.

If you're a non-technical user who needs to monitor competitor pages or track changes on specific websites, Browse.AI's point-and-click interface gets you there without writing a single line of code.

If your team needs semantic content understanding at scale — particularly for news, research, or product intelligence — Diffbot's Knowledge Graph and NLP-based extraction are hard to match.

For enterprise data pipelines with strict governance requirements, Import.io and Octoparse both offer the scheduling, reliability, and support tiers that production teams need.

And if you need structured data from specific domains like finance or investment research, Kadoa's consumption-based model lets you start without committing to a fixed monthly plan.

The short version: match the tool to your use case, not the other way around. Budget, technical skill level, and the kind of sites you're targeting should all factor into the decision. But if you need one recommendation that covers the widest range of use cases in 2026, Firecrawl is where to start.

Frequently Asked Questions

What is AI-powered web scraping?

AI-powered web scraping uses machine learning models and large language models to understand and extract web content semantically — without relying on fragile CSS selectors or XPath. Instead of targeting specific DOM elements, you describe what data you want in plain English and the AI figures out how to get it, even as website layouts change.

What is the best AI web scraping tool in 2026?

Firecrawl is the top choice for developers and AI teams. It handles JavaScript rendering, full-site crawling, autonomous research via the /agent endpoint, and interactive browser sessions via /interact — all through a single API with a free tier.

What is Firecrawl's /interact endpoint?

The /interact endpoint lets you scrape a page and stay in that browser session to take actions: clicking buttons, filling forms, handling logins, and extracting data that only appears after an interaction. You can use natural language prompts or Playwright code to control the session.

What is Firecrawl's /agent endpoint?

The /agent endpoint is an autonomous research API. You give it a prompt and an output schema, and it independently browses the web, searches multiple sources, and returns structured results — all from a single API call, with no orchestration code required.

Do I need to know how to code to use AI web scraping tools?

Not necessarily. Tools like Browse.AI offer a point-and-click interface with no coding required. Firecrawl also has a playground at firecrawl.dev/playground where you can scrape and experiment with schemas directly in the browser without writing any code.

How does AI web scraping handle JavaScript-rendered websites?

AI scraping tools run a headless browser in the cloud that executes JavaScript before returning the fully rendered page content. This means SPAs, infinite scroll pages, and dynamically loaded content all work out of the box — unlike plain HTTP requests which return empty HTML shells.
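The "empty shell" problem is easy to see with a small check. The sketch below counts visible text in raw HTML using Python's standard `html.parser`. Both HTML samples are invented, and the 40-character threshold is an arbitrary assumption rather than an established rule.

```python
from html.parser import HTMLParser


class VisibleTextParser(HTMLParser):
    """Collect text that a reader would see, skipping non-content tags."""

    SKIPPED = {"script", "style", "noscript", "title"}

    def __init__(self):
        super().__init__()
        self._skip_depth = 0  # > 0 while inside a skipped tag
        self.chunks = []

    def handle_starttag(self, tag, attrs):
        if tag in self.SKIPPED:
            self._skip_depth += 1

    def handle_endtag(self, tag):
        if tag in self.SKIPPED and self._skip_depth:
            self._skip_depth -= 1

    def handle_data(self, data):
        if not self._skip_depth and data.strip():
            self.chunks.append(data.strip())


def visible_text(html):
    parser = VisibleTextParser()
    parser.feed(html)
    parser.close()
    return " ".join(parser.chunks)


def looks_like_empty_shell(html, min_chars=40):
    """Heuristic: very little visible text suggests content is rendered by JS."""
    return len(visible_text(html)) < min_chars


# What a plain HTTP request to a single-page app might return.
SPA_SHELL = (
    '<html><head><title>Shop</title><script src="/bundle.js"></script></head>'
    '<body><div id="root"></div>'
    "<noscript>Enable JavaScript to view this site.</noscript></body></html>"
)

# The same page after a browser has executed the JavaScript.
RENDERED = (
    '<html><body><div id="root"><h1>Trail Running Shoes</h1>'
    "<p>Lightweight shoe with a cushioned sole. Price: $89.00</p></div></body></html>"
)

print(looks_like_empty_shell(SPA_SHELL))  # True
print(looks_like_empty_shell(RENDERED))   # False
```

A headless browser closes that gap by running the page's scripts first, so the extractor sees the second document instead of the first.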

How much does AI web scraping cost?

Most tools offer a free tier. Firecrawl gives you 1,000 free credits per month (one scrape per credit) with no credit card required. Paid plans start from around $16–$49/month depending on the tool and volume. Enterprise pricing is custom for high-volume use cases.

What is the difference between web scraping and web crawling?

Web scraping extracts specific data from known pages. Web crawling discovers and visits multiple pages across a site by following links. Large projects typically combine both: crawling to find all relevant pages, then scraping to extract data from each one. Firecrawl handles both in a single API.
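The distinction can be illustrated with a toy example. The "site" below is a hypothetical in-memory dictionary rather than real HTTP, so the sketch shows only the division of labor: `crawl` discovers pages by following links, and `scrape` extracts a field from a page that is already known.

```python
from collections import deque

# Hypothetical site: path -> (page title, outgoing links).
SITE = {
    "/": ("Home", ["/products", "/about"]),
    "/products": ("Products", ["/products/1", "/products/2", "/"]),
    "/products/1": ("Blue Widget", ["/products"]),
    "/products/2": ("Red Widget", ["/products"]),
    "/about": ("About Us", ["/"]),
    "/orphan": ("Unlinked Page", []),  # no page links here
}


def crawl(start):
    """Breadth-first discovery: follow links from `start`, visiting each page once."""
    seen = {start}
    queue = deque([start])
    order = []
    while queue:
        path = queue.popleft()
        order.append(path)
        for link in SITE[path][1]:
            if link in SITE and link not in seen:
                seen.add(link)
                queue.append(link)
    return order


def scrape(path):
    """Extraction: pull one specific field (the title) from a known page."""
    return {"path": path, "title": SITE[path][0]}


pages = crawl("/")                      # crawling finds the pages
records = [scrape(p) for p in pages]    # scraping extracts data from each

print(pages)
print(records[3])
```

Note that `/orphan` is never found: a crawler can only reach pages that are linked from somewhere it has already visited, which is why crawls are often supplemented with sitemaps or known URL lists.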