ScraperAPI is a well-known choice for teams that need to extract data at scale without managing proxy infrastructure. This guide reviews five alternatives that differ in scraping approach, pricing, and data output, from AI-native APIs to enterprise proxy platforms. For a broader look at the full landscape, see our web scraping API comparison.

TL;DR: Quick comparison

| Alternative | Best For | Starting Price | Key Advantage |
|---|---|---|---|
| Firecrawl | AI apps, LLM pipelines, developers | $0 (1,000 credits/month free) | LLM-ready markdown, full-site crawling, open-source |
| Zyte | Enterprise e-commerce and managed data delivery | Pay-as-you-go | 15 years of scraping expertise, managed data service |
| Apify | Broad automation across many sites | $0 (free tier) | 20,000+ ready-made actors, full browser automation |
| Bright Data | Enterprise scale, complex sites, large proxy network | Custom | 150M+ IPs, Web Unlocker, AI Scraper Studio |
| ScrapingBee | Developer-friendly API with fast SERP access | $49/month | Sub-second SERP data, AI extraction, simple pricing |

What is ScraperAPI: Quick overview

ScraperAPI is a web scraping service that handles proxy rotation, browser rendering, and request infrastructure automatically, so developers can extract data from any public website with a single API call.
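As a rough sketch of what that single API call looks like, the snippet below builds the request URL with Python's standard library. The endpoint `https://api.scraperapi.com/` and the `api_key`, `url`, and `render` query parameters reflect ScraperAPI's public documentation as we understand it; treat them as assumptions and confirm against the current docs. `build_scraperapi_url` is a hypothetical helper, not part of any SDK.

```python
from urllib.parse import urlencode

# Assumed endpoint; verify against ScraperAPI's current documentation.
SCRAPERAPI_ENDPOINT = "https://api.scraperapi.com/"


def build_scraperapi_url(api_key: str, target_url: str, render: bool = False) -> str:
    """Build the GET URL for one scrape request (hypothetical helper)."""
    params = {"api_key": api_key, "url": target_url}
    if render:
        # Assumed flag that asks for JavaScript rendering.
        params["render"] = "true"
    return f"{SCRAPERAPI_ENDPOINT}?{urlencode(params)}"


request_url = build_scraperapi_url("YOUR-API-KEY", "https://example.com/", render=True)
print(request_url)
```

Sending the resulting URL is then a plain GET with any HTTP client.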

Its product lineup spans six core tools:

| Product | What it does |
|---|---|
| Scraping API | Flagship product: a GET request API handling proxies, rendering, and request infrastructure |
| Structured Data Endpoints | Pre-built scrapers for Amazon, Google, Walmart; returns clean JSON, no parsing needed |
| Async Scraper | Submit millions of jobs at once, processed in the background |
| DataPipeline | Visual no-code tool for recurring scraping workflows |
| Large-Scale Data Acquisition | Enterprise-grade offering for massive request volumes |
| LangChain Integration | Gives LangChain agents real-time web access with geo-targeted scraping and clean output |

Think of ScraperAPI as a layered stack: the raw Scraping API sits at the bottom for developers who want control, Structured Data Endpoints sit above for teams who want pre-parsed data, and DataPipeline sits on top for automation without code.

Pricing overview:

| Plan | Monthly Price | Requests Included |
|---|---|---|
| Free | $0 | 5,000 API calls |
| Hobby | $49 | 100,000 |
| Startup | $149 | 1,000,000 |
| Business | $299 | 3,000,000 |
| Scaling | $475 | 5,000,000 |
| Enterprise | Custom | 5,000,000+ |

Note: complex sites consume more credits per request. E-commerce sites use 5 credits per request; search engines use up to 25.

Why users look for ScraperAPI alternatives

1. Pricing grows unpredictably at scale

ScraperAPI's credit model works well for simple scraping, but costs multiply quickly when scraping complex sites. E-commerce sites consume 5 credits per request and search engines up to 25 credits, making it difficult to forecast monthly spend.
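A small worked example shows how quickly the multipliers add up. The sketch below uses the per-request credit figures quoted in this article (1 for standard pages, 5 for e-commerce, 25 as the search-engine ceiling); the workload mix is hypothetical, and real multipliers depend on the target and the parameters used.

```python
# Credit multipliers quoted in this article; actual values vary by target and parameters.
CREDITS_PER_REQUEST = {"standard": 1, "ecommerce": 5, "search": 25}


def credits_needed(requests_by_type: dict[str, int]) -> int:
    """Total credits for a workload, given request counts per site type."""
    return sum(
        CREDITS_PER_REQUEST[site_type] * count
        for site_type, count in requests_by_type.items()
    )


# Hypothetical monthly workload: 100,000 requests in total.
workload = {"standard": 60_000, "ecommerce": 30_000, "search": 10_000}
total_requests = sum(workload.values())
total_credits = credits_needed(workload)
print(total_requests, total_credits)
```

Under these assumptions, 100,000 requests consume 460,000 credits, which is 4.6 times the Hobby plan's 100,000 allowance.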

John S., Small Business Founder, noted on G2:

Credit costs can add up significantly, especially if using the premium parameters, which can make it difficult to budget usage vs. cost accordingly.

Muhammed H., Engineer, shared a similar experience on G2:

While the service is reliable, the cost may quickly add up when scraping large amounts of data frequently. Additionally, occasional rate-limiting issues or request failures can be frustrating, especially during high-demand periods.

2. Limited support and visibility

When requests fail, getting guidance can be slow. The platform's black-box nature makes it difficult to debug what is happening behind the scenes or optimize for a specific target.

Tahmeem S. noted on G2:

The support is not that great, expected support to be more guided.

Deni H. echoed this on G2:

The support provided by services is often lacking and can be slow or unhelpful. The fact that it's a black-box solution and provides little visibility into what's going on behind the scenes could be another drawback.

Top 5 ScraperAPI alternatives

1. Firecrawl - AI-native, open-source web data API

Firecrawl takes a fundamentally different approach from ScraperAPI. While ScraperAPI focuses on data collection at scale with dedicated site-specific scrapers and proxy rotation, Firecrawl is purpose-built for AI and LLM applications that need clean, structured, machine-readable output.

The result is a tool that does more than scrape: it crawls entire domains, maps site structure, extracts structured data with natural language prompts, and can autonomously browse the web on your behalf.

How Firecrawl differs from ScraperAPI

| Feature | ScraperAPI | Firecrawl |
|---|---|---|
| Wins on | Structured endpoints for Amazon, Google, Walmart; 40M+ proxy network; async at massive scale | LLM-ready output, full-site crawling, open-source, autonomous AI agent |
| Built for | Data collection at scale | AI and LLM applications |
| Output | Raw HTML or clean JSON (via SDEs) | Clean markdown, HTML, structured JSON |
| Screenshots | Not offered | Full-page screenshots via API |
| Full site crawling | Async scraper handles bulk jobs, not native crawl | Native first-class feature |
| Site mapping | Not offered | Map all URLs on a domain |
| AI Agent mode | No | Autonomous agent with a single plain English prompt |
| Interactive actions | Not offered | Click, scroll, type, wait before scraping |
| LangChain / MCP | Dedicated LangChain solution | Native MCP server and CLI skill |
| Media parsing (PDF/DOCX) | Not mentioned | Yes |
| Open Source | No | Yes (87k+ GitHub stars, YC-backed) |
| Pricing model | 1-25 credits per request (by site complexity) | 1 credit per successful page, always |
| Starting price | $49/month | $0 (free tier), $16/month paid |

For AI developers: LLM-ready output from day one

ScraperAPI returns raw HTML or structured JSON depending on the endpoint. For AI applications, you still need to parse, clean, and format that output before it is usable in a prompt or pipeline.
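To make that post-processing step concrete, here is a minimal sketch using only Python's standard library. It strips tags and drops script and style contents from a made-up HTML snippet. `TextExtractor` and `html_to_text` are illustrative names, and a production pipeline would typically need much more (boilerplate removal, link handling, markdown conversion).

```python
from html.parser import HTMLParser


class TextExtractor(HTMLParser):
    """Collect visible text, skipping <script> and <style> contents."""

    SKIPPED_TAGS = {"script", "style"}

    def __init__(self) -> None:
        super().__init__()
        self._skip_depth = 0
        self.chunks: list[str] = []

    def handle_starttag(self, tag, attrs):
        if tag in self.SKIPPED_TAGS:
            self._skip_depth += 1

    def handle_endtag(self, tag):
        if tag in self.SKIPPED_TAGS and self._skip_depth > 0:
            self._skip_depth -= 1

    def handle_data(self, data):
        text = data.strip()
        if text and self._skip_depth == 0:
            self.chunks.append(text)


def html_to_text(html: str) -> str:
    """Return the visible text of an HTML document, one chunk per line."""
    parser = TextExtractor()
    parser.feed(html)
    parser.close()
    return "\n".join(parser.chunks)


sample_html = """
<html><head><style>body { color: red; }</style></head>
<body><h1>Pricing</h1><script>track();</script><p>Hobby: $49/month</p></body></html>
"""
cleaned = html_to_text(sample_html)
print(cleaned)
```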

Firecrawl skips that step. Every scrape outputs clean markdown natively, reducing LLM token consumption and eliminating post-processing:

```python
from firecrawl import Firecrawl

firecrawl = Firecrawl(api_key="fc-YOUR-API-KEY")

# Scrape a single page and request both markdown and HTML output
doc = firecrawl.scrape("https://firecrawl.dev", formats=["markdown", "html"])

print(doc)
```

No CSS selectors. No parsing. Just data.

Full-site crawling and site mapping

ScraperAPI's Async Scraper can process millions of URLs in bulk, but it does not natively crawl a domain and discover its URL structure. Firecrawl's /crawl and /map endpoints do:

- /crawl: Recursively scrape an entire domain, following links automatically

- /map: Return all URLs on a domain in seconds, useful for planning what to scrape

- /search: Search the web and return clean, structured results

This matters for teams building RAG systems, documentation chatbots, or AI agents that need to understand the full structure of a site before scraping it. For a deep dive, see our guide to web crawling with Firecrawl.
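For readers new to crawling, the idea of "following links automatically" can be sketched as a breadth-first traversal. The example below runs against a hypothetical in-memory link graph rather than the network, and it illustrates the general technique only; it is not Firecrawl's implementation, and `SITE_LINKS` and `crawl` are made-up names.

```python
from collections import deque

# Hypothetical in-memory site: each URL maps to the links found on that page.
SITE_LINKS = {
    "https://example.com/": ["https://example.com/docs", "https://example.com/pricing"],
    "https://example.com/docs": ["https://example.com/docs/api", "https://example.com/"],
    "https://example.com/docs/api": [],
    "https://example.com/pricing": ["https://other.example.org/"],
}


def crawl(start_url: str, limit: int = 100) -> list[str]:
    """Breadth-first crawl that only follows URLs sharing start_url as a prefix."""
    seen = {start_url}
    queue = deque([start_url])
    visited = []
    while queue and len(visited) < limit:
        url = queue.popleft()
        visited.append(url)
        for link in SITE_LINKS.get(url, []):
            # Skip off-site links and pages already queued.
            if link.startswith(start_url) and link not in seen:
                seen.add(link)
                queue.append(link)
    return visited


pages = crawl("https://example.com/")
print(pages)
```

The list of visited URLs is roughly what a map-style call gives you up front, while a crawl also fetches the content of each page along the way.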

Autonomous AI agent

Firecrawl's /agent endpoint lets you give the API a plain English goal and let it browse autonomously. No need to define which URLs to scrape or which selectors to use:

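```python
from firecrawl import Firecrawl

app = Firecrawl(api_key="fc-YOUR-API-KEY")

# Describe the goal in plain English; the agent decides which pages to visit
result = app.agent(
    prompt="Find the pricing page on example.com and extract all plan names and prices as JSON",
)
```

ScraperAPI has a LangChain integration for AI workflows, but does not offer an equivalent autonomous browsing agent.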

Browser sandbox for complex interactions

For sites that require authentication, multi-step flows, or complex user interactions before data appears, Firecrawl's browser sandbox gives you a full programmable browser environment exposed via API. Script real browser interactions, fill forms, capture screenshots, and extract content from authenticated pages, without managing headless browser infrastructure yourself. ScraperAPI does not offer an equivalent.

Open-source and self-hostable

ScraperAPI is a closed proprietary service. Firecrawl is fully open-source with 87k+ GitHub stars, backed by Y Combinator. You can self-host on your own infrastructure, audit the codebase, or contribute directly to the project.

Transparent pricing

| Plan | Monthly Cost | Credits Included |
|---|---|---|
| Free | $0 | 1,000 credits |
| Hobby | $16 | 5,000 credits |
| Standard | $83 | 100,000 credits |
| Growth | $333 | 500,000 credits |
| Scale | $599 | 1,000,000 credits |

Pricing is always 1 credit per successful page regardless of site complexity. Failed requests do not consume credits.
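Because every page costs one credit, the effective price per page is simple division. The sketch below derives dollars per 1,000 pages from the plan figures in the table above, assuming the full monthly allowance is used; `cost_per_thousand_pages` is an illustrative helper.

```python
# Plan prices and credit allowances as listed in the table above.
FIRECRAWL_PLANS = {
    "Hobby": (16, 5_000),
    "Standard": (83, 100_000),
    "Growth": (333, 500_000),
    "Scale": (599, 1_000_000),
}


def cost_per_thousand_pages(monthly_price: float, credits: int) -> float:
    """Dollars per 1,000 pages at 1 credit per page, if the whole allowance is used."""
    return round(monthly_price / credits * 1_000, 3)


rates = {
    plan: cost_per_thousand_pages(price, credits)
    for plan, (price, credits) in FIRECRAWL_PLANS.items()
}
print(rates)
```

On these numbers the per-page rate falls from $3.20 per 1,000 pages on Hobby to about $0.60 per 1,000 on Scale.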

When to choose Firecrawl over ScraperAPI

Choose Firecrawl if you:

- Are building AI apps, RAG systems, or LLM pipelines that need clean structured data

- Are crawling or mapping entire domains, not just individual pages

- Want affordable, transparent 1-credit-per-page pricing with no complexity multipliers

- Use LangChain, LlamaIndex, or other AI frameworks (Firecrawl has native MCP support)

- Want open-source, self-hostable infrastructure

- Need an autonomous agent that can browse the web with a plain English prompt

2. Zyte - Enterprise web scraping with 15 years of expertise

Zyte is one of the most established names in web scraping, built on 15 years of experience and the open-source Scrapy framework. Their platform combines a full-stack web scraping API with a managed data delivery service for teams that want data without running the scraping infrastructure themselves.

How Zyte differs from ScraperAPI

| Feature | ScraperAPI | Zyte |
|---|---|---|
| Wins on | Simpler entry-level API, lower starting price, 5K free calls | 15 years of scraping expertise, managed data service, Scrapy lineage |
| Core API | Single GET request API | AI-powered Web Scraping API |
| Managed Data | Not offered | Full managed delivery: Zyte's team scrapes on your behalf |
| Structured endpoints | 20+ site-specific SDEs | Product, news, flight, jobs, business data verticals |
| AI extraction | Not offered | AI-powered data extraction built into the API |
| Geotargeting | 40M+ proxies, 50+ countries | Global coverage |
| Pricing model | Per-request credit model | Pay-per-URL, tiered by monthly spend |
| Free trial | 5,000 free API calls | 14-day free trial |

Web Scraping API with AI-powered unblocking

Zyte's Web Scraping API handles proxy rotation, JavaScript rendering, and access management automatically. Their system has been refined over 15 years of scraping across thousands of sites. For teams scraping complex sites, this depth of experience can be an advantage over ScraperAPI's more standardized approach.

Managed Data: let Zyte's team handle it

Zyte's standout offering for enterprise teams is Managed Data. You define what data you need, and Zyte's team, combining their platform with human expertise, delivers structured feeds to you. No setup fees, no infrastructure management, no compliance headaches.

ScraperAPI's DataPipeline lets you automate scraping jobs yourself. Zyte's Managed Data goes a step further: someone else builds and maintains the pipeline.

Data verticals

Zyte offers pre-built data products for specific industries:

- Product and pricing data (e-commerce)

- News and article data (media monitoring)

- Flight and travel data

- Business places data (lead generation)

- Job postings, real estate, social media

- AI and LLM training data

This competes with ScraperAPI's Structured Data Endpoints, but covers a broader range of industries and is backed by a managed delivery team.

Key features

- Web Scraping API with automatic proxy management and rendering

- Managed Data delivery service (Zyte's team scrapes for you)

- AI-powered extraction for unstructured web content

- Scrapy integration (Zyte built and maintains the Scrapy framework)

- Vertical data products for e-commerce, news, flights, jobs, and real estate

Pricing

Zyte uses pay-per-URL pricing that scales with monthly spend. HTTP response body scraping starts at $0.13 per 1,000 URLs (pay-as-you-go) and drops to $0.06 per 1,000 with a $500/month commitment. Browser-rendered pages start at $1.01 per 1,000 URLs. Enterprise pricing is available. A 14-day free trial is offered.

When to choose Zyte over ScraperAPI

Choose Zyte if you:

- Need a managed service where someone else handles the scraping end-to-end

- Are scraping heavily protected enterprise sites at scale

- Want vertical-specific data products for e-commerce, news, or flights

- Prefer Scrapy-native infrastructure

- Need per-URL pricing with volume discounts rather than a credit multiplier model

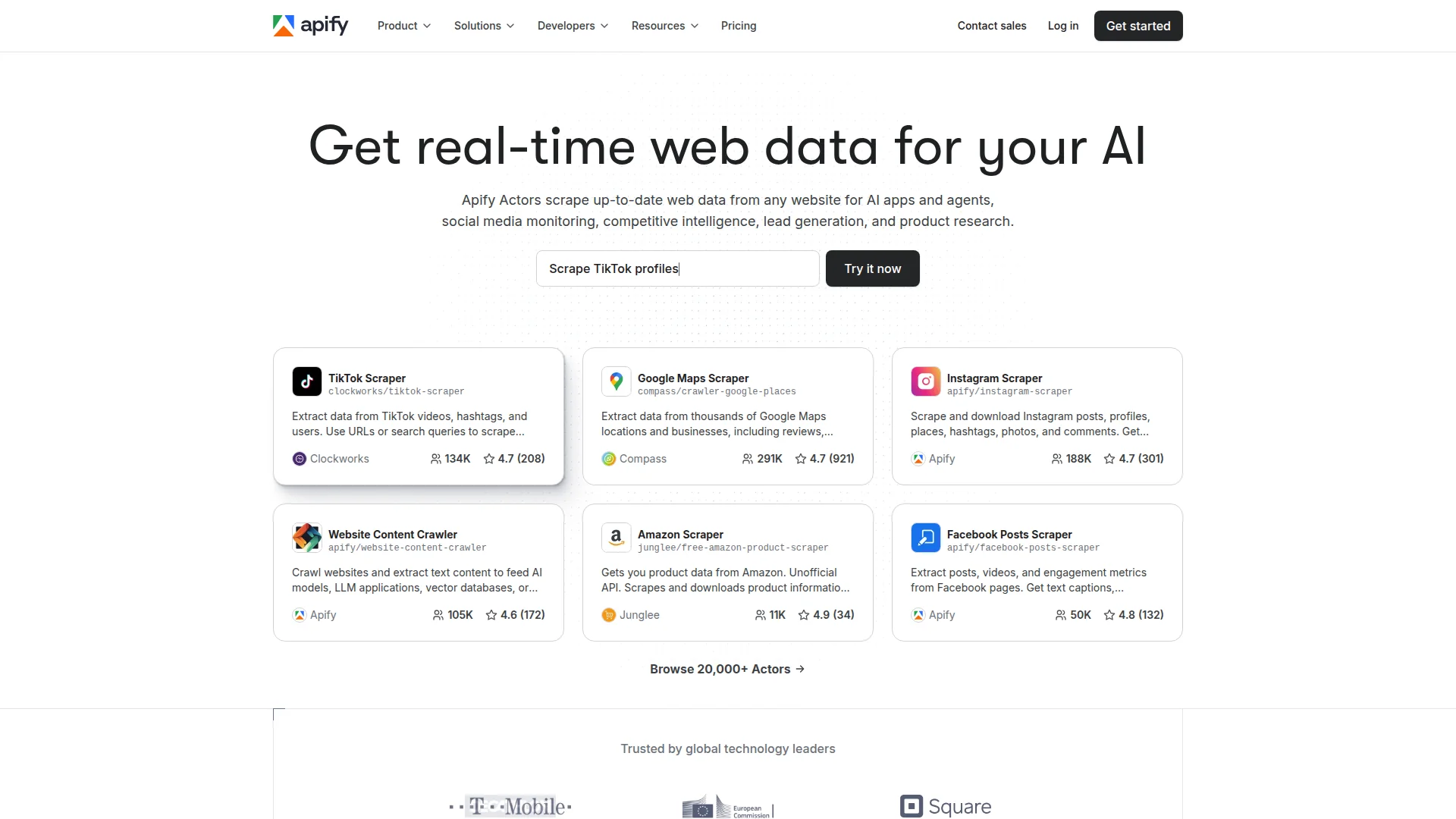

3. Apify - Full-stack automation with a 20,000+ actor marketplace

Apify is a cloud-based web scraping and automation platform with the largest marketplace of pre-built scrapers ("Actors") in the industry. With 20,000+ Actors covering everything from social media to e-commerce, Apify covers far more ground than ScraperAPI's 20+ Structured Data Endpoints.

For a deeper feature-by-feature breakdown, see Firecrawl vs. Apify.

How Apify differs from ScraperAPI

| Feature | ScraperAPI | Apify |
|---|---|---|
| Wins on | Simpler per-request API, geotargeting, dedicated SDEs for top sites | 20,000+ actors, full browser automation, AI agents, broader site coverage |
| Marketplace | 20+ Structured Data Endpoints | 20,000+ community and official Actors |
| Browser automation | Headless rendering only | Full Playwright and Puppeteer support |
| AI agent capabilities | LangChain integration | Dedicated AI agent infrastructure |
| Open-source SDK | No | Crawlee (open-source Python and JS) |
| Scheduling | DataPipeline | Native scheduling for any Actor |
| Pricing | Per-request credit model (1-25x per complexity) | Per-compute-unit model |
| Starting price | $49/month | $0 (free tier) |
| Support | Limited support noted in reviews | Chat and priority support on paid plans |

20,000+ ready-made Actors

ScraperAPI's Structured Data Endpoints cover popular sites like Amazon, Google, and Walmart. Apify's marketplace lists 20,000+ Actors spanning a far wider range of websites and use cases. If there is a site you need to scrape, there is likely an Actor for it.

The trade-off: community-maintained Actors vary in quality and maintenance status. Some are well-maintained; others may be outdated when target sites change their structure.

Full browser automation and AI agents

Beyond scraping, Apify supports full browser automation via Playwright and Puppeteer. This enables workflows that go beyond data extraction: form filling, interaction sequences, and complex multi-step flows that ScraperAPI's rendering mode cannot handle.

Apify also offers dedicated AI agent infrastructure, making it a stronger option than ScraperAPI's LangChain integration for complex automated workflows.

Crawlee: open-source SDK

Apify maintains Crawlee, an open-source Python and JavaScript crawling library. If you want to build custom scrapers using Apify's infrastructure without the marketplace, Crawlee gives you that foundation with full control.

Key features

- 20,000+ ready-made Actors for popular websites and use cases

- Full Playwright and Puppeteer browser automation

- AI agent infrastructure for complex multi-step workflows

- Crawlee open-source SDK for custom scrapers

- Built-in scheduling, monitoring, and cloud storage

- Integrations with Zapier, Make, and AI frameworks

Pricing

Apify uses a compute-unit model: Free ($0 with $5 credit), Starter ($29/month), Scale ($199/month), Business ($999/month). Compute unit rates decrease at higher tiers. Actor Marketplace access is billed separately per Actor.

When to choose Apify over ScraperAPI

Choose Apify if you:

- Need to scrape a wide variety of sites beyond ScraperAPI's 20+ SDEs

- Want full browser automation beyond headless rendering

- Need AI agent capabilities for complex, multi-step workflows

- Prefer a free tier to test before committing

- Want an open-source SDK (Crawlee) for building custom scrapers

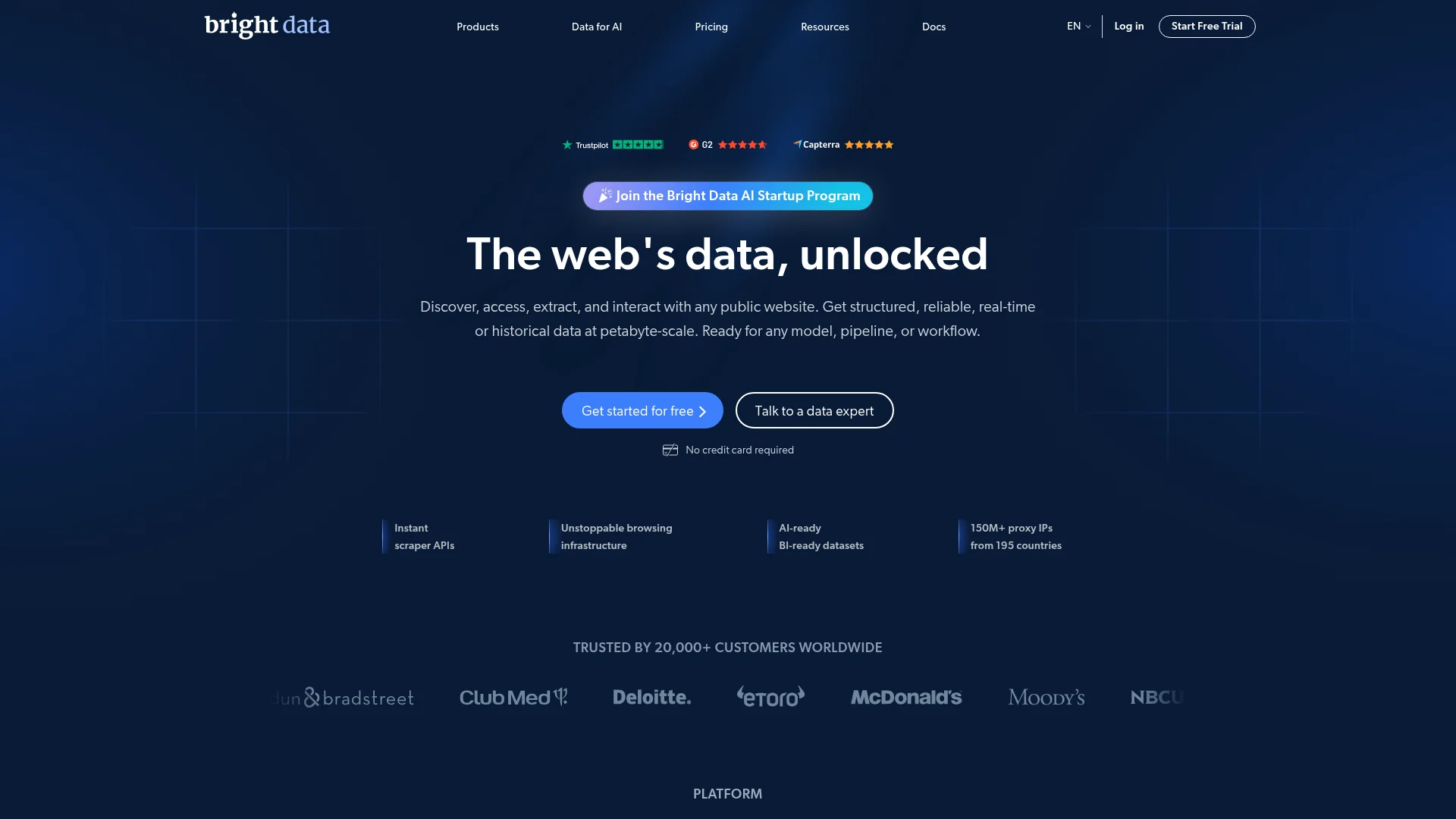

4. Bright Data - Enterprise proxy infrastructure with web scraping APIs

Bright Data is an enterprise-grade data platform combining the world's largest residential proxy network (150M+ IPs across 195 countries) with a suite of web scraping APIs, AI tools, and ready-made datasets. For teams with serious scale and reliability requirements, it sits above ScraperAPI in terms of infrastructure depth.

How Bright Data differs from ScraperAPI

| Feature | ScraperAPI | Bright Data |
|---|---|---|
| Wins on | Simpler pricing, lower entry point, easy setup for most sites | 150M+ IP pool, Web Unlocker, AI Scraper Studio, Dataset Marketplace |
| Proxy network | 40M+ proxies, 50+ countries | 150M+ IPs, 195 countries |
| Complex site handling | Automatic proxy management | Web Unlocker: auto-selects proxies, rendering, and retry logic |
| No-code scrapers | DataPipeline | 120+ pre-built scrapers (SERP, e-commerce, social) |
| AI tools | LangChain integration | AI Scraper Studio (turns websites into data pipelines) |
| Dataset marketplace | Not offered | Pre-collected datasets across major domains |
| Proxy types | Standard proxy rotation | Residential, datacenter, ISP, and mobile IPs |
| Pricing model | Per-request credits | Custom/enterprise (pay-per-GB for proxies) |
| Free trial | 5,000 free API calls | $5 free credit |

Web Unlocker: automatic site access configuration

Bright Data's Web Unlocker automatically determines whether residential proxies, JavaScript rendering, or specific retry logic is needed for each URL. You send a request and receive the data without manually selecting proxy types or tuning premium parameters.

This is more hands-off than ScraperAPI's approach, where using premium parameters (and paying the extra credits for them) requires you to know which sites need them upfront.
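Conceptually, this kind of automatic selection resembles an escalation loop: try the cheapest access method first and move to heavier ones only when a request is blocked. The sketch below illustrates that general pattern with a fake fetcher. It is not Bright Data's actual logic, and the tier names, `fetch_with_escalation`, and `fake_fetch` are all hypothetical.

```python
from typing import Callable

# Hypothetical access tiers, ordered from cheapest to most expensive.
ACCESS_TIERS = ["datacenter", "residential", "residential+render"]


def fetch_with_escalation(url: str, fetch: Callable[[str, str], int]) -> tuple[str, int]:
    """Try each tier in order; return (tier, attempts) for the first 200 response."""
    for attempts, tier in enumerate(ACCESS_TIERS, start=1):
        if fetch(url, tier) == 200:
            return tier, attempts
    raise RuntimeError(f"all tiers failed for {url}")


def fake_fetch(url: str, tier: str) -> int:
    """Stand-in for a real HTTP call: this pretend site blocks datacenter IPs."""
    return 403 if tier == "datacenter" else 200


result = fetch_with_escalation("https://example.com/", fake_fetch)
print(result)
```

With a service that handles this internally, the caller never sees the loop; with manually chosen premium parameters, the caller effectively has to pick the tier in advance.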

AI Scraper Studio

Bright Data's AI Scraper Studio lets you define what data you want in plain English, then builds a data pipeline for you. It is their answer to AI-native scraping, designed for teams feeding data into ML models or large-scale analytics.

150M+ IP proxy network

ScraperAPI has 40M+ proxies across 50+ countries. Bright Data operates 150M+ IPs across 195 countries with residential, datacenter, ISP, and mobile proxy types. For heavily geo-restricted content or sites that actively block datacenter IPs, Bright Data's network provides more headroom.

Dataset Marketplace

Bright Data offers a Marketplace of pre-collected datasets across e-commerce, social media, finance, and more. Instead of scraping data yourself, you can buy ready-to-download datasets for immediate use. ScraperAPI does not offer a dataset marketplace.

Key features

- 150M+ residential, datacenter, ISP, and mobile IPs across 195 countries

- Web Unlocker (automatic proxy selection and rendering)

- 120+ pre-built no-code scrapers for SERP, e-commerce, and social media

- AI Scraper Studio for building data pipelines with natural language

- Dataset Marketplace for ready-to-use pre-collected data

- Browsing Infrastructure (Scraping Browser) compatible with Playwright and Puppeteer

Pricing

Bright Data uses custom, enterprise pricing for most products. Residential proxies start at approximately $8.40/GB. Web Scraper API and Scraping Browser pricing is usage-based. A $5 free credit is available to test. See Bright Data's pricing page for current rates.

When to choose Bright Data over ScraperAPI

Choose Bright Data if you:

- Need a larger proxy pool (150M+ vs 40M+) with broader country coverage

- Want automatic site access configuration via Web Unlocker without managing proxy types yourself

- Are running enterprise-scale scraping where success rates are critical

- Need ready-made datasets without scraping at all

- Want a visual AI Scraper Studio for building pipelines

- Need Playwright/Puppeteer-compatible browser infrastructure

For a broader comparison of Bright Data against other scraping API alternatives (ScrapingBee, Apify, and Scrape.do), see the Bright Data alternatives guide.

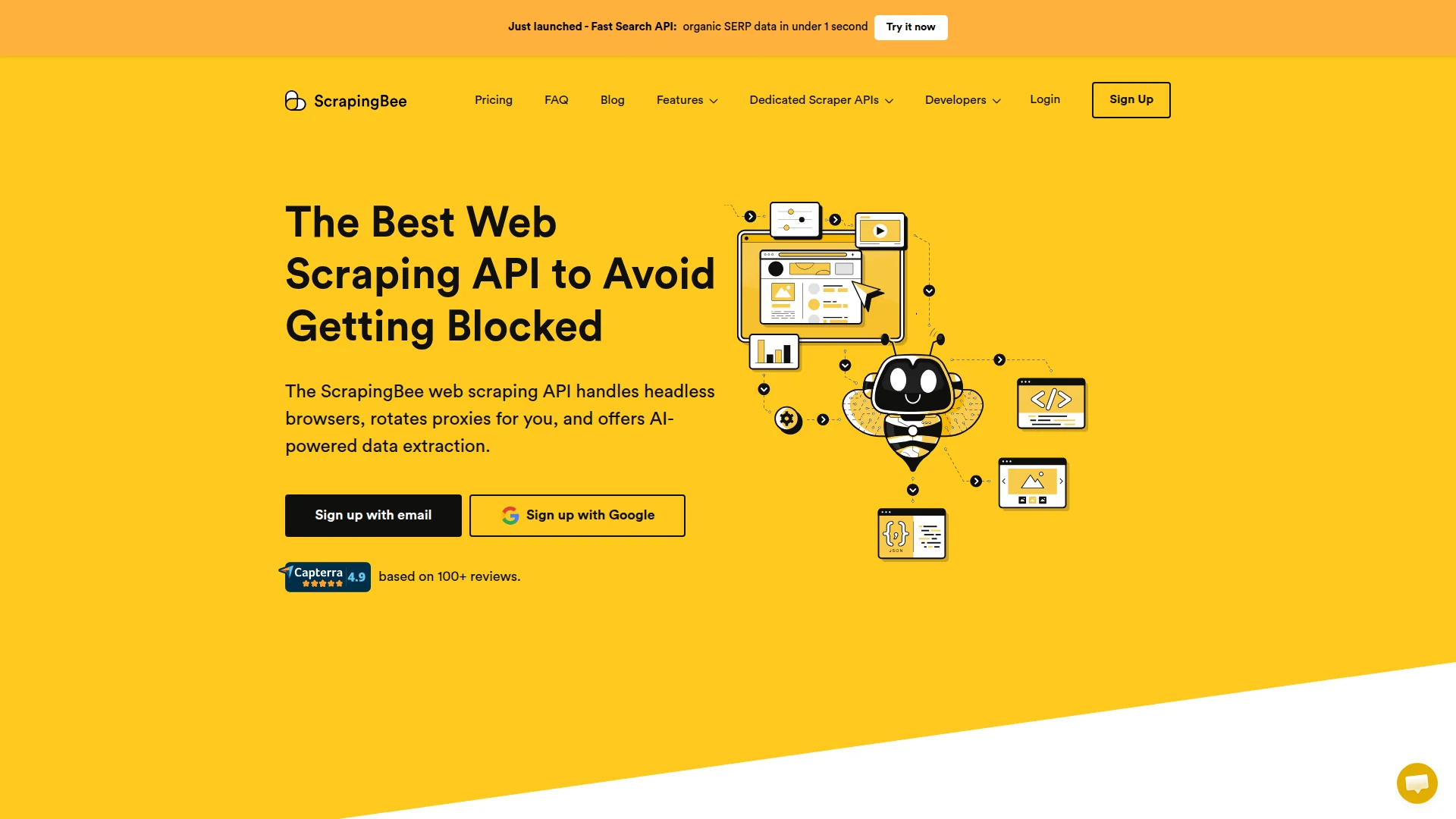

5. ScrapingBee - Developer-friendly scraping API with fast SERP access

ScrapingBee is a web scraping API that handles proxies and headless browsers, with a particular focus on developer experience and a fast Search Engine Results Page (SERP) API that returns organic data in under one second. It is a simpler, more accessible alternative to ScraperAPI for teams that do not need site-specific structured endpoints.

How ScrapingBee differs from ScraperAPI

| Feature | ScraperAPI | ScrapingBee |
|---|---|---|
| Wins on | Structured Data Endpoints (Amazon, Google, Walmart), DataPipeline, async at massive scale | Faster SERP API (under 1 second), AI extraction, Google cache support, more credits at entry price |
| Structured endpoints | 20+ dedicated site-specific scrapers | Not offered |
| SERP API | Standard scraping of search result pages | Organic SERP data in under 1 second |
| AI extraction | Not offered | Natural language extraction rules |
| Google cache | Not mentioned | Supported |
| JavaScript actions | Headless rendering | Click, scroll, custom JS execution |
| Screenshots | Not mentioned | Full-page and partial screenshots |
| DataPipeline | Visual no-code pipeline | Not offered |
| Starting price | $49/month (100,000 requests) | $49/month (250,000 credits) |

Fast SERP API

ScrapingBee's SERP API returns organic search results in under one second, which is significantly faster than scraping Google search pages through a standard proxy API. For teams monitoring SEO rankings, tracking competitor content, or building search-driven products, this is a meaningful differentiator.

AI extraction in plain English

ScrapingBee supports natural language extraction rules: describe what data you want, and the API extracts it without CSS selectors. This reduces maintenance overhead when target sites change their structure and makes it accessible to developers who are not comfortable writing XPath or CSS.

JavaScript interaction support

ScrapingBee supports JavaScript scenarios, letting you define click, scroll, and custom JavaScript execution as part of a scrape. This enables scraping interactive pages that require user interaction before data becomes visible, going beyond ScraperAPI's standard headless rendering.
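As a hedged illustration, a scenario is typically expressed as a JSON list of instructions passed alongside the target URL. The endpoint, the `js_scenario` parameter, and the `click`, `wait`, and `scroll_y` instruction names below reflect ScrapingBee's documentation as we understand it and should be treated as assumptions; the selector and target URL are placeholders.

```python
import json
from urllib.parse import urlencode

# Assumed endpoint and parameter names; check ScrapingBee's documentation before use.
SCRAPINGBEE_ENDPOINT = "https://app.scrapingbee.com/api/v1/"

# Hypothetical scenario: click a "load more" button, wait, then scroll down.
scenario = {
    "instructions": [
        {"click": "#load-more"},  # placeholder CSS selector
        {"wait": 1000},           # milliseconds
        {"scroll_y": 1000},       # pixels
    ]
}

params = {
    "api_key": "YOUR-API-KEY",
    "url": "https://example.com/products",
    "js_scenario": json.dumps(scenario),
}
request_url = f"{SCRAPINGBEE_ENDPOINT}?{urlencode(params)}"
print(request_url)
```

The snippet only builds the request URL; sending it with an HTTP client would run the scenario before the page content is returned.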

Key features

- Fast SERP API (organic search results in under 1 second)

- AI-powered extraction using plain English rules

- JavaScript rendering with click, scroll, and custom JS scenario support

- Full-page and partial screenshots

- Google cache support for historical data

- Proxy rotation with residential and datacenter options

- SDKs for Python, Node.js, PHP, Ruby, and more

Pricing

| Plan | Monthly Price | Credits Included |
|---|---|---|
| Freelance | $49 | 250,000 |
| Startup | $99 | 1,000,000 |
| Business | $249 | 3,000,000 |
| Business+ | $599 | 8,000,000 |

Note: JavaScript rendering costs 5 credits per request instead of 1.
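The rendering multiplier changes how far an allowance goes. A quick calculation for the $49 Freelance plan, using only the figures above (`effective_requests` is an illustrative helper):

```python
def effective_requests(credits: int, credits_per_request: int) -> int:
    """How many requests a credit allowance covers at a given per-request cost."""
    return credits // credits_per_request


# Freelance plan: 250,000 credits for $49/month.
plain = effective_requests(250_000, 1)
rendered = effective_requests(250_000, 5)
print(plain, rendered)
```

If every request needs JavaScript rendering, the 250,000 credits cover 50,000 pages rather than 250,000.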

When to choose ScrapingBee over ScraperAPI

Choose ScrapingBee if you:

- Need fast SERP data (under 1 second) without heavy infrastructure

- Want AI-powered extraction without writing CSS selectors

- Need JavaScript interaction support (click, scroll) for dynamic pages

- Are starting a new project and want more credits at the same entry price (250K vs 100K at $49)

- Do not need dedicated structured endpoints for specific sites like Amazon or Walmart

Which ScraperAPI alternative should you choose?

The right choice depends on your primary use case.

Building AI or LLM applications: Firecrawl is purpose-built for this. LLM-ready markdown output, native MCP server, autonomous agent mode, and open-source flexibility make it the clear choice.

Need managed data delivery (someone else handles scraping): Zyte's Managed Data service is the standout option here. They combine their platform with a delivery team so you can focus on using the data, not building the pipeline.

Wide variety of sites to scrape: Apify's 20,000+ actor marketplace covers far more ground than any structured endpoint list. Useful when the sites you need are not among ScraperAPI's 20+ SDEs.

Enterprise scale with complex, large-scale requirements: Bright Data's 150M+ IP network and Web Unlocker handle the toughest targets at the largest volumes.

Fast SERP data and a clean developer API: ScrapingBee delivers sub-second search results and natural language extraction at a competitive entry price.

ScraperAPI's Amazon/Google/Walmart SDEs at massive async scale: ScraperAPI still wins for teams heavily invested in those specific pipelines with volume requirements.

However, in 2026, your web extraction pipeline needs to be AI- and agent-ready. That's where Firecrawl beats all other solutions. Our guide to choosing web scraping tools can help you evaluate the right fit for your stack.

Start scraping with Firecrawl's free tier and see the difference for AI-powered data extraction.

Frequently Asked Questions

Is ScraperAPI good for web scraping?

ScraperAPI works well for large-scale data collection on specific high-value sites like Amazon, Google, and Walmart via its Structured Data Endpoints. It handles proxy management, JavaScript rendering, and page processing automatically. For teams building AI applications or needing LLM-ready output, alternatives like Firecrawl may be a better fit.

Why do users look for ScraperAPI alternatives?

Users commonly cite rising credit costs, especially when using premium parameters or scraping complex sites that consume 5 to 25 credits per request. Others mention limited support responsiveness and a black-box approach that gives little visibility into what is happening behind the scenes.

What's the cheapest alternative to ScraperAPI?

Firecrawl offers a free tier (1,000 credits per month) and paid plans starting at $16/month for 5,000 credits, priced at 1 credit per successful page. ScrapingBee starts at $49/month for 250,000 credits, though JavaScript rendering costs 5 credits per request.

Does Firecrawl replace ScraperAPI?

Firecrawl and ScraperAPI serve different primary use cases. ScraperAPI excels at large-scale scraping of specific high-value sites with dedicated Structured Data Endpoints. Firecrawl is purpose-built for AI and LLM applications, delivering clean markdown and structured JSON with full-site crawling, site mapping, and an autonomous agent endpoint. If you need AI-ready data, Firecrawl is a strong replacement.

Can I use ScraperAPI for small projects?

Yes. ScraperAPI offers 5,000 free API calls to get started. Their Hobby plan is $49/month for 100,000 requests. For very small or variable-usage projects, Firecrawl's free tier (1,000 credits per month) and pay-as-you-grow model may offer more flexibility.

Which ScraperAPI alternative has the best AI and LLM support?

Firecrawl is purpose-built for AI workflows. It outputs LLM-ready markdown natively, integrates with LangChain and LlamaIndex, offers a native MCP server and CLI skill, and has an autonomous /agent endpoint that browses the web with plain English prompts.